Model-Based FPGA and ASIC Design in the Context of Functional Safety

Overview

In addition to ASICs, FPGAs and SoCs are playing an increasing role across a growing number of systems and applications due to their unique attributes of flexibility, high throughput, low latency and performance per watt.

A key success factor in multidisciplinary FPGA, SoC and ASIC projects is efficient communication and collaboration across teams as well as early validation and verification of the design.

Quickly reacting to changing requirements in dynamic business environments such as autonomous and robotic systems is a significant competitive advantage.

Functional safety requirements impose an additional challenge in automotive, medical, railway and other industries.

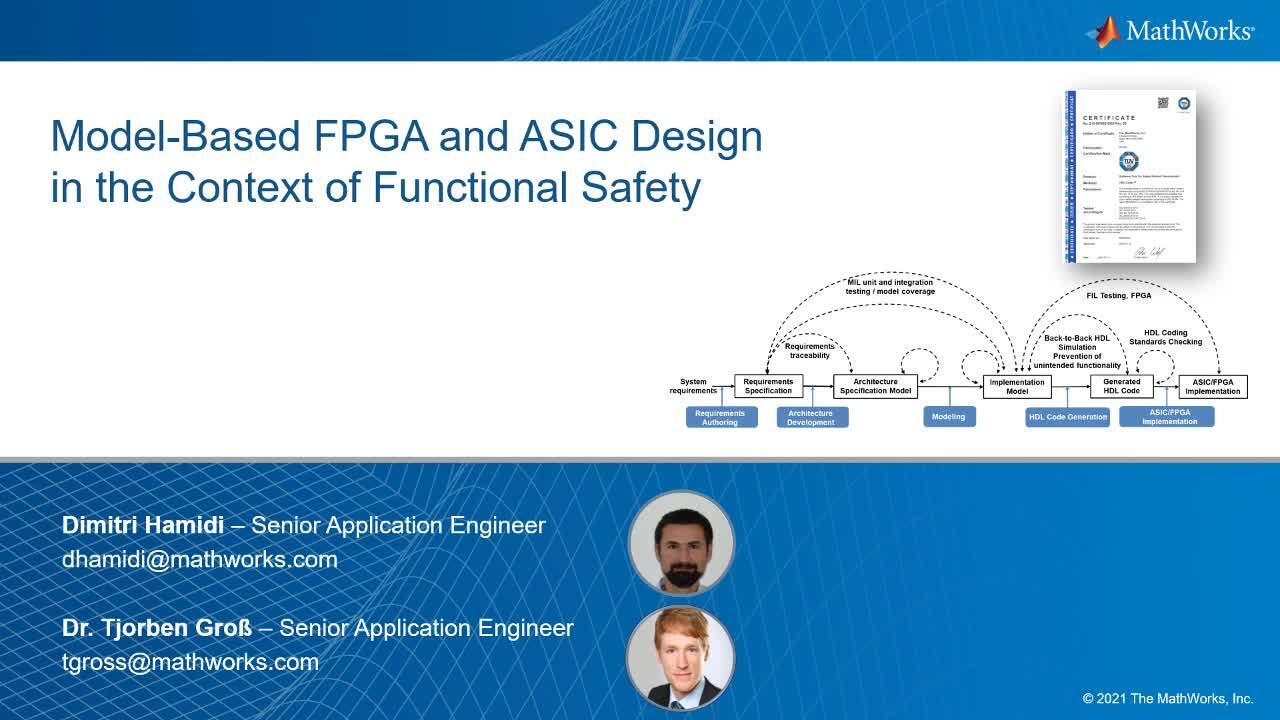

In this webinar we discuss an integrated workflow for designing and implementing signal processing, control and vision algorithms on FPGAs, ASICs and SoCs while addressing the challenges listed above. We briefly cover the process from requirements authoring to architectural modeling, then modeling for implementation and HDL code generation, with verification and validation at each step. We show how the integrated Model-Based Design toolchain helps you streamline compliance with functional safety standards such as ISO 26262, IEC 61508 or IEC 62304.

Highlights

- Benefits of model-based design for FPGA and ASIC

- A reference workflow for streamlining certification including:

  - system and hardware architecture modeling

  - model verification and validation

  - HDL code generation

  - equivalence test between model and HDL code

About the Presenters

Dipl.-Ing. Dimitri Hamidi is a senior application engineer at MathWorks focusing on FPGA design, signal & image processing as well as sensor fusion & tracking.

He studied electrical and information technology engineering at the Technical University of Munich.

Prior to joining MathWorks, he was a research associate at the German Aerospace Center (DLR), worked for FEI as an FPGA engineer, and was an algorithm engineer in Continental’s advanced engineering department (ADAS & AD).

Dr. Tjorben Groß works as a senior application engineer at MathWorks, focusing on verification and validation methods in the context of functional safety. At MathWorks he has been supporting customers in a wide range of industries, focusing on making embedded software safe and secure. Before joining MathWorks, he was involved in different development projects at the Fraunhofer Institute for Industrial Mathematics in Kaiserslautern, e.g. on safety monitoring of power generator shafts.

Recorded: 22 Jan 2021

Welcome, everyone, and thank you for joining our webinar today. My name is Dimitri Hamidi. I'm from application engineering, and one of my focus areas is [INAUDIBLE] generation and verification. And today, together with my colleague Tjorben Groß, we are going to present to you the topic of model-based design for FPGA and ASIC in the context of functional safety.

Hello, everyone. My name is Tjorben Groß, and my focus area is verification and validation methods on [INAUDIBLE] models and code, which are required for compliance with functional safety standards such as ISO 26262. Today, I will cover the verification and validation part, and I look forward to our presentation.

So here's an overview of our agenda for today. I will start this presentation by answering the question of why use model-based design for FPGA and ASIC. And then we will move on to show you the workflow for safety critical systems.

It has two interweaving parts. The first part is the development workflow for HDL code and the second one is the continuous verification and validation. And finally, Tjorben will talk a bit about tool qualification from an ISO 26262 perspective.

So why use model-based design for FPGA and ASIC, and why for safety-critical systems? I want to share with you first what our customers say. Airbus develops safety-critical avionics using model-based design, and what they say is that it enables them to increase product quality and decrease errors, both in the requirement phase and in the implementation phase. Philips Healthcare is another adopter of model-based design, and they say that models are an effective language for communication and collaboration and that model-based design lets them focus on their ideas instead of focusing on writing the HDL code.

Now before we move on with model-based design, I want to explain a bit the motivation behind it. The numbers that I'm going to present to you today are based on the recent research group report. The first number is that around 2/3 of ASIC and FPGA projects are behind schedule and that over 50% of project time is spent on verification. And in fact, more than 40% is [INAUDIBLE] debugging. Nonetheless, in 85% of the projects that went into production, bugs escaped. And finally, another interesting figure: half of all functional flaws originate from the specification.

In our view, the issue is the classical development workflow. It's still used widely in the industry. And to be more specific than that, it's the way different teams communicate and the disconnection in their workflows. Specifications are usually created in separate groups, and the communication is done by documents, scripts and meetings.

Now the problem is that there is no early way to validate the specification, which is based on these ad hoc sources of information. And as a result, errors are getting introduced into the specification. And those errors will probably propagate and remain undetected until the later stages in the process, where fixing them becomes very costly.

The other aspect is the disconnection in the workflow. This specification will be handed over to software and hardware engineers. They translate it manually into a design.

Manual coding is not only time-consuming, but it's also error-prone and will introduce bugs. This will overall increase the verification effort. And finally, by using this process, it's hard to adapt to changing regulatory requirements.

Model-based design addresses those issues, and the main idea is to focus on modeling. Models can increase communication efficiency because models are unambiguous but also because they reflect the way engineers think. In fact, as a first step, multiple domains collaborate to create an executable specification of the system.

And by doing that, they'll be able to validate the requirements early. Hardware and software engineers will later help transform this ideal model into a detailed implementation model. And from this optimized model, production quality [INAUDIBLE] HDL code can be generated. This will not only save time for writing code but also for debugging.

Another key aspect is the continuous verification and validation because this will help detect errors at the right stage, and this will prevent them from propagating. Apart from that, most of the verification effort will now be done on the model level, which is more effective. And finally, the same test [INAUDIBLE] that were used for model verification can be leveraged for code verification without the need to rewrite them.

Today, we are going to take a look at model-based design from the functional safety perspective. Although the reference workflow we present to you is valid for multiple standards, we are going to illustrate it using ISO 26262 for automotive. And with that, I will hand it over to Tjorben to continue.

As mentioned, we will look today at ISO 26262, the functional safety standard for automotive. Let's start with an overview of those parts that are relevant for HDL development. Part 5, product development at the hardware level, references part 11 for ASICs or FPGAs.

For model-based design development aspects, however, part 11 references part 6, which is product development at the software level. So we will start with the development aspects of part 6 on the model level, and then we'll go to automatic HDL code generation while, of course, also taking into account requirements from part 11. We'll also dive into part 8, mostly clause 11, where tool classification and qualification are discussed.

Part 4, product development at the system level, could partially be covered with model-based systems engineering, and we'll discuss that briefly in the beginning. But first, let's see how the IEC Certification Kit can streamline development in accordance with functional safety standards. We have packaged the artifacts that you can use for developing software with model-based design in compliance with the functional safety standards related to IEC 61508 in the IEC Certification Kit. This, of course, includes, next to the ones for medical, railway, and agricultural vehicles, also ISO 26262 for automotive.

The IEC Certification Kit consists of two parts. The first part is reference workflows for model-based design, and we focus today on the one for HDL code generation along with systematic verification and validation methods for Simulink models and generated HDL code. The second part is all the material you need for tool classification and tool qualification, including test suites and vendor audits based on the reference workflow.

We will first go through the reference workflow with a focus on the HDL generation and verification methods and afterwards discuss the topic of tool qualification. Here you see the reference workflow from system requirements to ASIC or FPGA implementation. Important steps here are requirements authoring, architecture development, modeling, HDL generation, and ASIC or FPGA implementation.

We start on the left with the requirements and the architecture development. With Simulink Requirements, you can author requirements and link them to your architecture created with System Composer. This establishes requirements traceability. So let us see how you can link your requirements to your system architecture using Requirements Toolbox and System Composer.

System Composer allows you to create all the hardware and software components contained in your system. You can quickly draw a system architecture without having implementation information. In the compute system, you can now see that the components are colored based on their stereotypes, which might be software, hardware, or system containing both. Requirements linking can be done in the requirements perspective.

Here, you can see from the badges which components are already linked to a requirement. Linking a new requirement to one component of your system can be done just by drag and drop from the list of your requirements at the bottom of the screen. If you want to move the requirement badges around, this can be done freely so that it suits your design.

If you have added, for example, the cost of the components, the overall system cost can easily be calculated using a few lines of MATLAB code, with System Composer doing the rest. For large systems, you can use predefined or custom views to focus only on the relevant parts of your system. For example, you can create a spotlight view showing upstream and downstream connections to your component. Or you can view all ASIL A components or the [INAUDIBLE] stages for the components.

Since we are in the [INAUDIBLE] design environment, you can connect one component to a Simulink model and even perform simulation and testing on your whole system. Typically, requirements are stored in some external tools, like Word, Excel, [INAUDIBLE], or [INAUDIBLE]. You can use different methods to import requirements from these tools, for example, through the [INAUDIBLE] interface.

Once imported, you can edit requirements in Simulink Requirements and add new ones. All those requirements can be linked to architecture elements such as components or ports and model elements like blocks or Stateflow charts. You will be able to assess the implementation status and report back the links to the model elements via [INAUDIBLE] F.
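As a rough illustration of what assessing the implementation status from requirement links can mean, here is a minimal Python sketch. It is not the API of any MathWorks product; the requirement IDs, the element paths, and the `implementation_status` helper are hypothetical and only show the bookkeeping idea.

```python
from typing import Dict, List


def implementation_status(links: Dict[str, List[str]]) -> Dict[str, object]:
    """Summarize which requirements are linked to at least one design element."""
    implemented = sorted(req for req, elements in links.items() if elements)
    missing = sorted(req for req, elements in links.items() if not elements)
    percent = 100.0 * len(implemented) / len(links) if links else 0.0
    return {"implemented": implemented, "missing": missing, "percent": percent}


# Hypothetical requirement IDs mapped to hypothetical architecture or model elements.
links = {
    "REQ-1": ["controller/fpga_part/current_loop"],
    "REQ-2": ["controller/cpu_part/mode_logic", "controller/cpu_part:port_speed"],
    "REQ-3": [],  # authored but not linked yet
    "REQ-4": ["controller/fpga_part/pwm"],
}

status = implementation_status(links)
print(status["missing"], status["percent"])
```

A requirement with an empty link list shows up as missing, which is the kind of gap a traceability report is meant to expose.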

In case of requirement changes in the external tools, you'll be notified so that you can review the changes and react accordingly. As seen before, you can easily set up and modify your architecture and link it to requirements. You can add stereotypes and properties like cost or [INAUDIBLE] to your components. And based on these and others, you can use MATLAB to run analyses, like a cost roll-up or a check of whether you're using valid or invalid [INAUDIBLE] decompositions.
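The cost roll-up mentioned here is essentially a recursive sum over the component hierarchy. The following Python sketch shows that idea only; it is not System Composer or MATLAB code, and the `Component` class, its `cost` property, and the example numbers are assumptions made for illustration.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Component:
    """Hypothetical architecture component carrying a cost property."""
    name: str
    cost: float = 0.0  # own cost, excluding children
    children: List["Component"] = field(default_factory=list)


def rolled_up_cost(component: Component) -> float:
    """Return the component's own cost plus the cost of all its descendants."""
    return component.cost + sum(rolled_up_cost(c) for c in component.children)


# Illustrative architecture; names and numbers are made up.
system = Component("controller", children=[
    Component("fpga_part", cost=40.0,
              children=[Component("adc_interface", cost=5.0)]),
    Component("cpu_part", cost=25.0),
])

total = rolled_up_cost(system)
print(total)
```

The same traversal pattern would work for any additive property attached to components, such as power or weight.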

Also, you can create custom views to focus only on the relevant parts of a system, for example, only on those parts of the system that a particular component is interacting with or on all the components with particular properties. The next step will be to implement the model according to your requirements with Simulink, Stateflow, Fixed-Point Designer, and other modeling tools. Of course, all Simulink and Stateflow elements can be linked to requirements, just like architecture components, so that in this phase, too, you can establish requirements traceability. Dimitri, could you please introduce modeling in the context of HDL projects?

Thank you, Tjorben. So let's continue with modeling. Systems involving digital designs are usually heterogeneous.

If you take a radar development project, for example, there are at least seven domains that need to collaborate efficiently in order to be successful. And Simulink enables them to do that because Simulink is a multi-domain simulation and modeling environment. You can run both time-discrete features such as your [INAUDIBLE] design but also time-continuous ones such as RF or analog components.

The obvious advantage is that the collaboration can now start earlier, and integration problems can be identified from the beginning. And from a digital design perspective, Simulink is simply a graphical way to describe hardware. In order to manage these large multi-domain modeling problems, Simulink Projects provides you with the ability to organize your own models and scripts but also to reference the projects of other teams.

You can connect it to version control with SVN or Git. And with that, you'll be able to do effective peer reviewing and merging of code and models. You can go a step further and do continuous integration with [INAUDIBLE] server, for example.

Apart from that, by using the dependency analysis feature, you'll be able to find out which other components might be affected by your changes. And this will reduce the chances for errors. So in sum, you'll be able to approach multi-domain modeling in a very structured way.
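Finding out which components might be affected by a change amounts to a reverse reachability search on the dependency graph. The Python sketch below illustrates that search; it is not how Simulink Projects is implemented, and the file names and the `impacted_by` helper are hypothetical.

```python
from collections import deque
from typing import Dict, List, Set


def impacted_by(changed: str, depends_on: Dict[str, List[str]]) -> Set[str]:
    """Return every file that directly or transitively depends on `changed`."""
    # Invert the edges: for each file, record who uses it.
    used_by: Dict[str, List[str]] = {}
    for item, deps in depends_on.items():
        for dep in deps:
            used_by.setdefault(dep, []).append(item)

    impacted: Set[str] = set()
    queue = deque([changed])
    while queue:  # breadth-first walk along the "used by" edges
        current = queue.popleft()
        for user in used_by.get(current, []):
            if user not in impacted:
                impacted.add(user)
                queue.append(user)
    return impacted


# Hypothetical project files and their direct dependencies.
depends_on = {
    "radar_system.slx": ["dsp_chain.slx", "rf_frontend.slx"],
    "dsp_chain.slx": ["fir_coeffs.m"],
    "rf_frontend.slx": [],
    "test_dsp.m": ["dsp_chain.slx"],
}

affected = impacted_by("fir_coeffs.m", depends_on)
print(sorted(affected))
```

In this made-up project, a change to the coefficient script reaches the DSP model, its test, and the top-level system, but not the RF front end.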

Simulink is also a flexible modeling environment in the sense that you can leverage [INAUDIBLE] Stateflow to design your Simulink model. And therefore, you can choose the paradigm that is most suitable for the problem at hand. And also, you don't need to build everything from scratch.

Simulink provides domain-specific libraries, such as [INAUDIBLE] signal processing, communication, and vision. Finally, you can bring your handwritten code into Simulink, and this is done by co-simulation. In a couple of minutes, I'm going to explain to you how to optimize those models for targeting hardware. But for now, I will pass it to Tjorben, who will continue with model verification and validation.

We will be dividing the verification and validation methods into two stages-- first, the design verification on the Simulink model, and then the HDL code verification. The HDL code verification will be covered later by Dimitri. I will now start with the design verification on the model level where you want to make sure that the model is correctly implemented and ready for production code generation.

As soon as you start modeling, you want to make sure that you're modeling in accordance with safety standards like ISO 26262 or guidelines such as company-internal design rules. These and more aspects can be addressed through review and static analysis on the model level. Modeling standards checking and design error detection are enabled by Simulink Check, HDL Coder, and Simulink Design Verifier. Let's look more closely at what Simulink Check in combination with HDL Coder can provide you.

Let us focus on two aspects: first, static model checks using the Model Advisor, and second, assessing the model quality using the Metrics Dashboard. I'm now starting the Model Advisor for the part of the system that will be deployed to the FPGA. We offer various checks for different categories.

So for example, you can run checks for ISO 26262 compliance and also for HDL Coder. I'm now looking at the part that we will generate HDL code from. So I will run the HDL Coder checks to make sure that my model is well designed for HDL code generation. We can see that many of the checks passed, zero threw an error, and some finished with a warning, indicating that something should be fixed.

We have, for example, checks regarding naming conventions we must adhere to. Another warning that we see here is the check that verifies model settings to be correct for HDL code generation. We can see that a few settings are not correct and should be changed before finally generating the HDL code.

When developing software, we recommend monitoring the overall status and the quality of your project continuously. For this, we designed the Metrics Dashboard, which I have just opened for the whole controller containing the FPGA part and also the CPU part. This dashboard contains an overview of Model Advisor checks, including a grid view to easily spot warnings for components or particular checks, and details about model size, like number of blocks, parameters, and interfaces. Also, model complexity and other metrics are calculated.

You have just seen the use of the Model Advisor for guideline checking to prepare the model for production HDL code generation. Checks in the Model Advisor can be customized, and new custom checks can be added if you, for example, need to adhere to company rules. Many checks from the Model Advisor can even check the model while you're editing, like a spellchecker.

To assess model quality, you can use the Metrics Dashboard for analyzing complexity, reusability, size, and other metrics. In the demo, we did not show the formal verification methods on the model level provided by Simulink Design Verifier. When you're implementing your requirements, you have the possibility to test. That's what we will see in a moment.

But you also have the possibility to formally prove certain properties of your model. In addition to that, you can find design errors like division by zero or dead logic. So let's also add property proving to the reference workflow diagram.

After statically verifying and perhaps improving the model quality, you want to perform model testing and collect coverage. In case the coverage is not as high as required, test cases to increase the coverage can be automatically generated using Simulink Design Verifier. So let's have a look at the tools.

I will show you how requirement-based testing can be done and how test cases can be automatically generated to increase coverage. Therefore, we start in the requirements editor and follow the link to the test case. Here, you can see that the model under test is a state machine that we have created a test harness for, which contains a set of stimuli and test criteria.

Clicking on the Run button starts this test that iterates over 11 different stimuli. Unfortunately, two of our tests did not pass. Let's investigate why this happened.

We can see that the test failed since the signal was not set to true within the expected 0.2 seconds after the fault but slightly later. By expanding the signal in the bottom, it is easy to trace the problem back to its source. After identifying the issue, it was fortunately straightforward to fix. And now we can see all 11 iterations pass.
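The pass/fail criterion in this test is a timing assessment: a flag has to become true within a deadline after a fault. A minimal Python sketch of such a check follows. It is not Simulink Test code; the function names, the 50 ms sample time, and the fault time are assumptions, and times are kept as integer milliseconds to avoid floating-point comparison issues.

```python
from typing import Optional, Sequence


def first_time_true(times_ms: Sequence[int], values: Sequence[bool],
                    start_ms: int) -> Optional[int]:
    """Return the first sample time at or after start_ms where the signal is true."""
    for t, v in zip(times_ms, values):
        if t >= start_ms and v:
            return t
    return None


def responds_within(times_ms: Sequence[int], values: Sequence[bool],
                    fault_ms: int, deadline_ms: int = 200) -> bool:
    """Check that the signal becomes true no later than deadline_ms after the fault."""
    t_set = first_time_true(times_ms, values, fault_ms)
    return t_set is not None and t_set - fault_ms <= deadline_ms


times_ms = list(range(0, 1000, 50))       # assumed 50 ms sample time
fault_ms = 250                            # assumed fault injection time
late = [t >= 500 for t in times_ms]       # flag set 250 ms after the fault
fixed = [t >= 400 for t in times_ms]      # flag set 150 ms after the fault

late_ok = responds_within(times_ms, late, fault_ms)
fixed_ok = responds_within(times_ms, fixed, fault_ms)
print(late_ok, fixed_ok)
```

The first trace mirrors the failing case described above, where the signal is set slightly too late; the second mirrors the behavior after the fix.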

While executing this test, coverage was also collected. We can see that we did not obtain full coverage. For example, only 87% decision coverage was measured.

These coverage results can be highlighted on the model, where green elements indicate full coverage and red elements indicate missing coverage. Now we should add more tests to achieve full coverage. Sometimes you might face a situation where you are missing just a few percentage points to full coverage. In this case, you can use the automatic test generation from Simulink Design Verifier to add tests for completing the coverage.
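To make the notion of decision coverage more tangible, here is a simplified Python sketch that counts which outcomes of each decision have been exercised by a set of tests. It is a teaching model rather than the metric definition used by Simulink Coverage; the `DecisionCoverage` class and the `limiter` unit under test are hypothetical.

```python
from typing import Dict, List, Set, Tuple


class DecisionCoverage:
    """Records which outcomes (True/False) each named decision has taken."""

    def __init__(self, decisions: List[str]) -> None:
        self._seen: Dict[str, Set[bool]] = {name: set() for name in decisions}

    def record(self, name: str, outcome: bool) -> bool:
        """Log the outcome of a decision and pass it through unchanged."""
        self._seen[name].add(bool(outcome))
        return bool(outcome)

    def percent(self) -> float:
        """Exercised outcomes divided by all possible outcomes, in percent."""
        hit = sum(len(outcomes) for outcomes in self._seen.values())
        return 100.0 * hit / (2 * len(self._seen))

    def missing(self) -> List[Tuple[str, bool]]:
        """Outcomes that no test has exercised yet."""
        return [(name, want) for name, outcomes in self._seen.items()
                for want in (True, False) if want not in outcomes]


def limiter(cov: DecisionCoverage, x: float, lo: float, hi: float) -> float:
    """Hypothetical unit under test with two instrumented decisions."""
    if cov.record("below_lo", x < lo):
        return lo
    if cov.record("above_hi", x > hi):
        return hi
    return x


cov = DecisionCoverage(["below_lo", "above_hi"])
limiter(cov, 5.0, 0.0, 10.0)    # both decisions evaluate to False
limiter(cov, -1.0, 0.0, 10.0)   # below_lo evaluates to True
before = cov.percent()
gaps = cov.missing()
print(before, gaps)

limiter(cov, 11.0, 0.0, 10.0)   # an extra test targeting the reported gap
after = cov.percent()
print(after)
```

The list of missing outcomes is what a test generator would target; here a single extra stimulus closes the gap.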

The tests are generated in a new test case that we can now run, merging the coverage results with those of the requirement-based tests. In this case, perhaps design errors in our state machine made it impossible to achieve full coverage. Design error detection from Simulink Design Verifier could help to identify them. Let us recall what Simulink Test offers you for testing your models. First, you can isolate the unit under test using a test harness and apply different test stimuli, such as signals, temporal or logic-based.

As a framework, you can use simulation, equivalence, or baseline tests. The Test Manager provides an environment for authoring, managing, and executing test cases manually or automated in a continuous integration system. Also, it provides various means of assessing the test results and generating reports. In addition, you can measure coverage with Simulink Coverage.

Also, the automatic test case generation for increasing the coverage was shown in the demo. Dynamic testing and coverage analysis mark the last steps before coming to the HDL code generation and HDL code verification. Here you see an overview of all the methods that we have covered so far on the model level. Dimitri, could you please show us how the HDL code is generated, verified, and validated?

As I mentioned at the beginning of this webinar, a Simulink model starts as a high-level executable specification and will need to be refined by adding implementation details and optimizing the architecture. HDL Coder can afterwards generate portable and synthesizable VHDL and Verilog code for FPGA and ASIC designs. HDL Coder also supports mixed-precision data types. Apart from fixed point, of course, code generation is supported for half-, single-, or double-precision floating point.

In this slide, I want to illustrate how to optimize the model for targeting hardware. If you are starting with an ideal floating-point model in the beginning, the first step is to optimize data types. For that, I will later introduce the Fixed-Point Tool, with which you can accelerate this process. From there, HDL code can be generated.

HDL Coder also provides an initial estimate for area and critical path. Having said that, the critical path estimation works only for FPGAs. [INAUDIBLE] assessment.

You can decide if your design targets can be met even before running the time-consuming implementation step. If that's not the case, you might, of course, choose to adapt the model manually. However, there are many options to optimize automatically.

For example, you can explore different hardware micro-architectures. Let me elaborate a bit on that. You can see on the right side of this slide the HDL options for a square root function.

In the hardware architecture, you can select among three different algorithms with some additional options. Another example is the HDL-optimized FFT. There, you can choose between two hardware architectures.

The first one is optimized for low latency, and the second one is for area. Another example is the HDL-optimized FIR filter. It allows you to select the proper filter structure in the options.

Sometimes it's necessary to replace a function with a lookup table, and the Lookup Table Optimizer can generate interpolated lookup tables from Simulink blocks or MATLAB functions. And you can control the results by setting the precision of the approximation. So if the implementation meets your design goals, you are basically done.
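The trade-off between table size and approximation precision can be sketched in a few lines of Python. This is a simple illustration with evenly spaced breakpoints and linear interpolation, not the algorithm used by the Lookup Table Optimizer; the function names, the probing grid, and the doubling strategy are assumptions.

```python
import math
from bisect import bisect_right
from typing import Callable, List, Tuple


def build_lut(func: Callable[[float], float], lo: float, hi: float,
              n_points: int) -> Tuple[List[float], List[float]]:
    """Sample func at n_points evenly spaced breakpoints on [lo, hi]."""
    xs = [lo + (hi - lo) * i / (n_points - 1) for i in range(n_points)]
    return xs, [func(x) for x in xs]


def lut_eval(xs: List[float], ys: List[float], x: float) -> float:
    """Linearly interpolate the table at x, clamping x to the table range."""
    x = min(max(x, xs[0]), xs[-1])
    i = min(bisect_right(xs, x) - 1, len(xs) - 2)   # index of the left breakpoint
    t = (x - xs[i]) / (xs[i + 1] - xs[i])
    return ys[i] + t * (ys[i + 1] - ys[i])


def smallest_lut(func: Callable[[float], float], lo: float, hi: float,
                 tolerance: float, max_points: int = 4097):
    """Refine the table until the probed error is within tolerance."""
    probes = [lo + (hi - lo) * k / 2000 for k in range(2001)]
    n = 2
    while n <= max_points:
        xs, ys = build_lut(func, lo, hi, n)
        err = max(abs(func(p) - lut_eval(xs, ys, p)) for p in probes)
        if err <= tolerance:
            return xs, ys, err
        n = 2 * n - 1   # halve the spacing while keeping existing breakpoints
    raise ValueError("tolerance not reached within max_points")


xs, ys, err = smallest_lut(math.sqrt, 1.0, 4.0, tolerance=1e-3)
print(len(xs), err)
```

Tightening the tolerance increases the number of breakpoints, which in hardware translates into more memory for the table.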

But otherwise, you can start another iteration. In this way, you can explore your design space faster and optimize the model for targeting hardware. This is the Fixed-Point Tool I mentioned earlier. It can convert floating point to fixed point and optimize fixed-point data types as well.

In order to give you a better idea on how it works, I will demonstrate it in a short demo. The first workflow that I want to show you is the fully automated one. For that, you need simulation test benches, but the tool can also consider your design ranges.

The user has to define the tolerance. This is the allowed difference between the output signal before and after conversion. You can choose a vector of allowable word lengths and also some parameters for the optimization algorithm. Now let's start the optimization.

In this case, there was a valid solution that was found by the fixed point optimization. Here, you can compare the results. Those are the signals before and after conversion, and you can also see the tolerance regions that you defined.
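The automated workflow can be pictured as a search over the allowed word lengths for the smallest type whose conversion error stays within the tolerance. The Python sketch below is a deliberately simple brute-force version of that idea for a single signal; it is not the optimization algorithm of the Fixed-Point Tool, and the sample values and function names are made up.

```python
from typing import Iterable, Optional, Sequence, Tuple


def quantize(x: float, word_length: int, fraction_length: int) -> float:
    """Round x to a signed fixed-point grid and saturate at the type limits."""
    scale = 2 ** fraction_length
    q_min = -(2 ** (word_length - 1))
    q_max = 2 ** (word_length - 1) - 1
    q = max(q_min, min(q_max, round(x * scale)))
    return q / scale


def fraction_length_for_range(max_abs: float, word_length: int) -> int:
    """Largest fraction length that still represents max_abs without overflow."""
    fl = word_length - 1
    while max_abs * 2 ** fl > 2 ** (word_length - 1) - 1:
        fl -= 1
    return fl


def smallest_word_length(samples: Sequence[float], tolerance: float,
                         word_lengths: Iterable[int]) -> Optional[Tuple[int, int, float]]:
    """Try word lengths in ascending order; return the first one within tolerance."""
    max_abs = max(abs(s) for s in samples)
    for wl in sorted(word_lengths):
        fl = fraction_length_for_range(max_abs, wl)
        err = max(abs(s - quantize(s, wl, fl)) for s in samples)
        if err <= tolerance:
            return wl, fl, err
    return None   # no allowed word length meets the tolerance


# Made-up logged signal values, tolerance, and allowed word lengths.
samples = [0.1, -1.3, 2.55, 3.7]
result = smallest_word_length(samples, tolerance=0.01, word_lengths=[8, 10, 12, 16, 18])
print(result)
```

With these values, 8 bits are rejected because one sample is off by more than the tolerance, and 10 bits with 7 fraction bits are accepted.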

The second workflow I want to show you is the semi-automatic one. I will choose the same settings as before. And now the tolerance is only used to assess the pass-fail behavior.

If I click on the correct [INAUDIBLE] button, the simulation will run, and the tool will collect a data histogram for each block in the model. The histogram indicates how many times a bit was the most significant bit during simulation. There you can also check the calculated minimum and maximum values but also your manual input.

Based on this information, the Fixed-Point Tool will propose either the fraction length or the word length. I want to optimize the fraction length, and I will select a word length of 18. This column contains the proposed data types, and you can edit them manually.
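The histogram and the resulting proposal can be mimicked with a short Python sketch: count how often each bit position was the most significant one, then reserve enough integer bits for the largest position and give the rest of the word to the fraction. This is an illustration under simplifying assumptions (signed type, no safety margin), not the proposal logic of the Fixed-Point Tool; the sample values are made up.

```python
import math
from collections import Counter
from typing import Iterable


def msb_histogram(samples: Iterable[float]) -> Counter:
    """Count, per bit position, how often it was the most significant bit."""
    hist: Counter = Counter()
    for x in samples:
        if x == 0:
            continue  # zero has no most significant bit
        # frexp gives |x| = m * 2**e with 0.5 <= m < 1, so the MSB weight is 2**(e - 1).
        _, exponent = math.frexp(abs(x))
        hist[exponent - 1] += 1
    return hist


def propose_fraction_length(hist: Counter, word_length: int) -> int:
    """Signed type: one sign bit, integer bits for the largest MSB, rest is fraction."""
    integer_bits = max(hist) + 1   # assumes at least one nonzero sample was logged
    return word_length - 1 - integer_bits


samples = [0.3, 0.6, 1.5, 3.0, 3.9, 0.0]   # made-up logged values
hist = msb_histogram(samples)
fraction_length = propose_fraction_length(hist, word_length=18)
print(dict(hist), fraction_length)
```

For these samples the largest most significant bit has weight 2, so two integer bits are reserved and an 18-bit word leaves 15 fraction bits.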

For example, in the case of this block, there is an underflow. You also have the possibility to easily check where it is [INAUDIBLE] in the model. And based on this information, you can adapt the word length or the fraction length accordingly. Then I will apply the new data types to the model, and I will run the simulation with the embedded types for comparison with floating point. As seen before, you can visualize the signals and the tolerance, and also assess the pass-fail behavior.

Apart from optimizing data types, you can optimize timing using automated pipelining. The adaptive pipelining on the left will insert pipelines automatically. The distributed pipelining on the right will redistribute the available pipelines in your design or an additionally defined delay budget.

To optimize area, HDL Coder uses resource sharing. The four multipliers in this example will be replaced by a single one by multiplexing [INAUDIBLE] oversampling. In the same manner, you can share, for example, identical subsystems or stream vector computations.
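The trade made by resource sharing, fewer multipliers in exchange for more clock cycles per sample, can be shown with a small behavioral model in Python. This is only a software analogy, not generated hardware or HDL Coder output; the `Multiplier` class and both functions are hypothetical.

```python
from typing import List, Sequence, Tuple


class Multiplier:
    """Stand-in for one hardware multiplier; counts how often it is used."""

    def __init__(self) -> None:
        self.uses = 0

    def multiply(self, x: int, y: int) -> int:
        self.uses += 1
        return x * y


def parallel_products(a: Sequence[int], b: Sequence[int]) -> Tuple[List[int], int]:
    """One multiplier per operand pair, all working in the same clock cycle."""
    units = [Multiplier() for _ in a]
    results = [u.multiply(x, y) for u, x, y in zip(units, a, b)]
    return results, len(units)


def shared_products(a: Sequence[int], b: Sequence[int]) -> Tuple[List[int], int, int]:
    """One multiplier, time-multiplexed over len(a) fast clock cycles."""
    unit = Multiplier()
    results = []
    for x, y in zip(a, b):   # each iteration models one fast clock cycle
        results.append(unit.multiply(x, y))
    return results, 1, unit.uses


a, b = [1, 2, 3, 4], [5, 6, 7, 8]
par_results, par_units = parallel_products(a, b)
shr_results, shr_units, fast_cycles = shared_products(a, b)
print(par_results == shr_results, par_units, shr_units, fast_cycles)
```

Both versions produce the same products; the shared one uses a single multiplier but needs four fast cycles per sample, which is why the shared logic has to run at a higher rate than the model sample rate.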

Clock-rate pipelining is a further optimization approach. The [INAUDIBLE] established between the model sample rate and the clock rate on the FPGA. Now this enables the optimizations I mentioned before, even in feedback loops. And now I want to generate the [INAUDIBLE] code and show you what the code looks like.

This is a PMSM motor control application. You can implement it on an SoC. This part of the algorithm would be implemented on the processor, and the SGL algorithm would be implemented on the FPGA. This is how you can do model-based hardware [INAUDIBLE] design.

Now I want to generate code. I will use the HDL Workflow Advisor. After completion, you get an HDL code generation report. If you go, for example, into the high-level resource report, you will have an idea of how many resources are going to be instantiated in your design.

In our case, there are going to be certain multipliers. And you get this information per subsystem. Now let's check the critical path. It is estimated here.

And if you click on this link, it will show the critical path inside the generated model. So it starts with this register here and ends with this one. Now let's have a look at the code. The code is well structured and even commented.

And the signal names correspond to the ones in your model. And those are the components corresponding to your subsystems. If you click on this link, it will forward you to the subsystem that generated this code. And you can, of course, navigate back.

If you link your requirements to your model, as Tjorben told you before, those links to the requirements are generated automatically. So in this way, you can also verify that the code meets your design requirements. HDL Coder can also help you integrate your IP into a reference design. It can generate standalone IP cores with standard AXI interfaces.

But there's also an automated IP integration workflow, and you can set it up for your board and your reference design using the provided API. And there's a finite amount. We support many popular [INAUDIBLE] for different applications.

Through the previous verification and validation measures shown by Tjorben, we ensure that all requirements are implemented and that the implementation model behaves according to its specification. The next step is to demonstrate the equivalence between the model and the generated code. That is sometimes called translation validation.

As you can see on the right side of this reference workflow, the equivalence must be demonstrated with back-to-back simulation between the HDL code and the implementation model. Back-to-back simulation will also help with uncovering unintended functionality by enabling the code coverage analysis in the HDL simulator. On the other hand, if you are targeting FPGAs, FPGA in the loop will verify the implementation of your HDL code.

So let's go into details. In order to ensure that the RTL description behaves the same as your already verified implementation model, the first option will be so-called co-simulation. In fact, to make it clearer, I will now run a short demo. This is a test match fire controller.

I want to generate HDL code from the controller, and I want to verify this code using co-simulation. And therefore, I will now go to the HDL Workflow Advisor, and I will set the test bench option. I will select the co-simulation model, and I will ensure that the HDL code coverage is also enabled.

So this is the co-simulation model that was generated automatically. The first step is to launch the HDL simulator. Then I will run the co-simulation.

And while the co-simulation is running, you can see the output of the HDL code from the HDL simulator and the output of the model. And here, you can verify that they are identical. If you go to this compare subsystem, you're going to see that there are assertions that are generated.

And this is very useful when you want to integrate this co-simulation model into the Simulink Test Manager. This is the so-called co-simulation loop. In this case, it was configured automatically for the generated code, but you can also use this [INAUDIBLE] for co-simulating with your handwritten code.

And this way, you can also bring your manually written code into Simulink. And you can also verify it against the golden reference, for example. Now let's switch to the HDL simulator. You can see here the code coverage results.

And this information will help with the analysis for the presence of unintended functionality. A further option is to generate HDL test benches and run them in an [INAUDIBLE] simulator.

The SystemVerilog DPI-C test bench will create behavioral models for the stimuli and the implementation model. The stimuli will be applied to the HDL code and the reference model simultaneously, and there's a check that verifies the equivalence. The HDL test bench, which is another option, is created by running a Simulink simulation to capture the input vectors and the expected output data.
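The principle behind these test benches, capturing expected outputs from a reference model and replaying the same stimuli against the implementation, can be sketched in plain Python. This is a conceptual stand-in only: no HDL simulator is involved, and the running-sum example, the `RunningSumDut` and `BuggyDut` classes, and the `back_to_back` helper are all hypothetical.

```python
from collections import deque
from typing import List, Sequence, Tuple


def reference_model(samples: Sequence[int]) -> List[int]:
    """Golden reference: sum of the current and the previous three samples."""
    return [sum(samples[max(0, i - 3): i + 1]) for i in range(len(samples))]


class RunningSumDut:
    """Stand-in for the implementation: a register-style running sum."""

    def __init__(self) -> None:
        self.taps = deque([0, 0, 0, 0], maxlen=4)   # last four inputs
        self.acc = 0

    def step(self, x: int) -> int:
        self.acc += x - self.taps[0]   # add the new sample, drop the oldest
        self.taps.append(x)
        return self.acc


class BuggyDut(RunningSumDut):
    """Faulty variant that forgets to subtract the oldest sample."""

    def step(self, x: int) -> int:
        self.acc += x
        return self.acc


def back_to_back(stimuli: Sequence[int], expected: Sequence[int],
                 dut) -> List[Tuple[int, int, int, int]]:
    """Replay the stimuli on the DUT and list (index, input, expected, actual) mismatches."""
    mismatches = []
    for i, (x, want) in enumerate(zip(stimuli, expected)):
        got = dut.step(x)
        if got != want:
            mismatches.append((i, x, want, got))
    return mismatches


stimuli = [1, 2, 3, 4, 5, -6, 7]
expected = reference_model(stimuli)           # captured expected output vectors
good = back_to_back(stimuli, expected, RunningSumDut())
bad = back_to_back(stimuli, expected, BuggyDut())
print(good, bad[0])
```

The correct implementation produces no mismatches, while the faulty one diverges at the fifth sample, which is the first point where the oldest value has to leave the window.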

FPGA-in-the-loop must be used for verifying the implementation of the HDL code on the FPGA. In this setting, Simulink is synchronized with the hardware [INAUDIBLE] and compares the output of the FPGA with the output of the Simulink model. This works very similarly to what we have seen in the cosimulation [INAUDIBLE]. Finally, the handwritten code must also be verified using the same approaches. Unfortunately, I won't be able to elaborate on this.

The last step in the reference workflow is verifying the FPGA or ASIC implementation as part of the target board or the ECU. This must be done using hardware-in-the-loop testing. Hardware-in-the-loop testing can be performed using Speedgoat target computers provided by our partners.

The benefit is that the same test harnesses and model tests that you created for simulation can now be reused to test the integrated system in real time. Along with the test cases, [INAUDIBLE] models can also be automatically deployed to the Speedgoat, either on the processor or on the built-in FPGA. During testing, the responses from the hardware [INAUDIBLE] will be collected and sent back to the Simulink Test Manager for validation.

And now we believe the reference workflow is complete. We started with the requirement [INAUDIBLE] and showed you how to create the system architecture. Then we talked about modeling and how to optimize models for implementation and code generation.

We also showed you the required verification and validation measures on the model level as well as on the code level. And finally, we arrived at hardware integration testing using hardware-in-the-loop. With that, I will once again hand it over to Jordan for our final topic for today.

The next and last section will focus on tool classification and tool qualification, which is required for many tools used in an ISO 26262 development workflow. This topic can be found in Part 8 of the standard. The IEC Certification Kit contains all the important documents for mapping the ISO 26262 requirements onto Model-Based Design tools.

It also contains information on tool classification and tool qualification, such as assessment reports, tool use cases, and workflow conformance documents that can be adopted and used as a checklist. It also contains tool validation test suites that can be executed to generate tool validation reports. So how is tool classification and tool qualification described in the ISO 26262 standard itself?

You first need to describe the tool use cases for each of the tools you're using, in order to analyze the impact it could have if the tool has an error. If the error cannot affect the safety of your project, for example, if the development tool might crash, then you have tool impact one, TI1, which leads to tool confidence level one, TCL1, and no further qualification measures are required. If the error in the tool use case would have an impact, then we would have tool impact two, so TI2.

And as the next step, we would need to determine whether we would be able to detect this error in another step that follows in our development workflow. If the probability of finding the error is high, we have tool detection one, TD1, and need only a low confidence level, which is, again, TCL1, and no further qualification measures are required. If there is a medium probability of finding the error, we get to TCL2, and if there is no or low probability of finding the error, we arrive at TCL3. For TCL2 and TCL3, we would need to apply appropriate tool qualification measures, such as a tool validation report based on the tool validation test suites, or other measures.
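The classification logic just described can be written down as a small lookup. The following Python sketch is only an illustration: the function name `tool_confidence_level` is hypothetical, and the labels TD2 and TD3 for medium and low detection probability follow the standard's naming rather than anything said in the talk.

```python
from typing import Optional


def tool_confidence_level(tool_impact: int,
                          tool_detection: Optional[int] = None) -> str:
    """Return the tool confidence level for a given TI and TD.

    tool_impact:    1 (TI1, error cannot affect safety) or 2 (TI2)
    tool_detection: 1 (TD1, high), 2 (TD2, medium), 3 (TD3, low or no
                    probability of detecting the error); only needed for TI2
    """
    if tool_impact == 1:
        return "TCL1"  # no safety impact: detection is not evaluated
    if tool_impact != 2:
        raise ValueError("tool_impact must be 1 or 2")
    levels = {1: "TCL1", 2: "TCL2", 3: "TCL3"}
    if tool_detection not in levels:
        raise ValueError("tool_detection must be 1, 2 or 3 for TI2")
    return levels[tool_detection]


def needs_qualification(tcl: str) -> bool:
    """Qualification measures are required for TCL2 and TCL3 only."""
    return tcl in ("TCL2", "TCL3")


if __name__ == "__main__":
    for ti, td in [(1, None), (2, 1), (2, 2), (2, 3)]:
        tcl = tool_confidence_level(ti, td)
        print(f"TI{ti}, TD{td}: {tcl}, "
              f"qualification needed: {needs_qualification(tcl)}")
```

Writing the decision this way makes it visible that a strong downstream detection step (TD1) brings even a safety-relevant tool use case back to TCL1.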

Let's have a look at how that is done in the tool. Here you see the Certification Artifacts Explorer, where all the documents and test suites are included. For example, for each of the standards, you see the corresponding document mapping the tools onto the required methods, like the one for ISO 26262.

For each of the tools, here, for example, for Simulink Check, you see the certificate and the report. We now want to have a look at the tool qualification package because this includes the classification we saw on the previous slide. Here we can see the tool use cases, potential malfunctions and erroneous output, along with error prevention and detection measures.

In the tool classification summary, you see a table that lists the potential malfunctions, the use cases, the resulting tool impact with its justification, and the prevention and detection measures with the resulting tool detection and its justification. On the right, you finally see the tool confidence level, here, for example, TCL2. In case we have TCL2, we need tool qualification measures, and depending on the [INAUDIBLE], one part of this might be to run the test suite to get a tool validation report.

I will now run the tests from the Artifacts Explorer, and after a while, the report containing all the results is shown. For each result, we see information about the Simulink model that was checked and the expected and actual results. In Part 8, clause 11 of the ISO 26262 standard, you find the possible methods to qualify the tools.

The tool validation report based on the tests that we just saw would contribute to method 1c, the validation of the software tool. With the IEC Certification Kit, you can also apply 1b, the evaluation of the tool development process, which was performed by the [INAUDIBLE], and the evidence is provided in the form of certificates and assessment reports. A summary from the HDL Coder certificate report is shown here together with a certificate. In addition to the suitability of HDL Coder for all [INAUDIBLE], it shows that if the reference workflow is used, TCL1 can be achieved.

So how is the qualification work then divided between MathWorks and you, the user? ISO 26262 asks the tool user to do the final tool qualification, since only the tool user knows what the workflow looks like and can document it accordingly. However, we at MathWorks have already performed pre-classification and prequalification work for the generic tool use cases based on the reference workflow that we discussed earlier in today's webinar, and the [INAUDIBLE] provided us with an independent assessment of the reference workflow and the prequalification artifacts.

This is already the end of our webinar. We've shown you the reference workflow that covers many of the development, verification, and validation activities required by safety-critical standards. Furthermore, it enables collaboration and shortens development time, and the reduction in verification effort is also an important aspect of this workflow.

And the ultimate goal is to enable you to streamline functional safety standards compliance in your embedded project. In case you're already planning your next development project in the context of functional safety, please reach out to us, and we will be happy to discuss these topics. In addition to that, we can support you with our training and with our consulting.