resubLoss

Resubstitution classification loss for discriminant analysis classifier

Description

L = resubLoss(Mdl) returns the classification loss L by resubstitution for the trained discriminant analysis classifier Mdl, using the training data stored in Mdl.X and the corresponding true class labels stored in Mdl.Y. The resubstitution loss is the loss computed for the same data that fitcdiscr used to create Mdl.

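L = resubLoss(___,LossFun=lossf) computes the loss using the loss function lossf, in addition to the input argument in the previous syntax.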

The classification loss (L) is a resubstitution quality measure and is returned as a numeric scalar. Its interpretation depends on the loss function (lossf), but in general, better classifiers yield smaller classification loss values.
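As a rough sketch of the second syntax, the following script computes the resubstitution loss of an iris model under several built-in loss functions. It assumes the Statistics and Machine Learning Toolbox and MATLAB R2021a or later (for the Name=Value syntax); the variable names are illustrative.

```matlab
% Train a discriminant analysis classifier on the Fisher iris data.
load fisheriris
Mdl = fitcdiscr(meas,species);

% Resubstitution loss with the default loss function.
Ldefault = resubLoss(Mdl);

% Resubstitution loss with explicitly chosen built-in loss functions.
Lerr  = resubLoss(Mdl,LossFun="classiferror");
Lcost = resubLoss(Mdl,LossFun="mincost");
Lquad = resubLoss(Mdl,LossFun="quadratic");

% Collect the results side by side for inspection.
results = table(Ldefault,Lerr,Lcost,Lquad)
```

Because this model uses the default cost matrix, "classiferror" and "mincost" should agree. Values from different loss functions are not on a common scale, so compare each one only across models.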

Examples

Compute the resubstitution classification error for the Fisher iris data.

Create a classification model for the Fisher iris data.

load fisheriris
mdl = fitcdiscr(meas,species);

Compute the resubstitution classification error.

L = resubLoss(mdl)

L =

    0.0200

Input Arguments

Mdl: Discriminant analysis classifier, specified as a ClassificationDiscriminant model object trained with fitcdiscr.

LossFun: Loss function, specified as a built-in loss function name or a function handle.

The following table describes the values for the built-in loss functions. Specify one using the corresponding character vector or string scalar.

| Value | Description |
|---|---|
| "binodeviance" | Binomial deviance |
| "classifcost" | Observed misclassification cost |
| "classiferror" | Misclassified rate in decimal |
| "exponential" | Exponential loss |
| "hinge" | Hinge loss |
| "logit" | Logistic loss |
| "mincost" | Minimal expected misclassification cost (for classification scores that are posterior probabilities) |
| "quadratic" | Quadratic loss |

"mincost" is appropriate for classification scores that are posterior probabilities. Discriminant analysis classifiers return posterior probabilities as classification scores by default (see predict).

Specify your own function using function handle notation. Suppose that n is the number of observations in Mdl.X, and K is the number of distinct classes (numel(Mdl.ClassNames)). Your function must have the signature

lossvalue = lossfun(C,S,W,Cost)

where:

- The output argument lossvalue is a scalar.
- You specify the function name (lossfun).
- C is an n-by-K logical matrix with rows indicating the class to which the corresponding observation belongs. The column order corresponds to the class order in Mdl.ClassNames. Create C by setting C(p,q) = 1 if observation p is in class q, for each row. Set all other elements of row p to 0.
- S is an n-by-K numeric matrix of classification scores, similar to the output of predict. The column order corresponds to the class order in Mdl.ClassNames.
- W is an n-by-1 numeric vector of observation weights. If you pass W, the software normalizes the weights to sum to 1.
- Cost is a K-by-K numeric matrix of misclassification costs. For example, Cost = ones(K) - eye(K) specifies a cost of 0 for correct classification and 1 for misclassification.

Example: LossFun="binodeviance"

Example: LossFun=@lossf

Data Types: char | string | function_handle
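A custom loss can be passed as a function handle. The sketch below uses a hypothetical anonymous function, errLoss, that follows the lossvalue = lossfun(C,S,W,Cost) signature and computes a weighted misclassification rate. An observation counts as misclassified when its true-class score is below the largest score in its row, which assumes there are no tied scores.

```matlab
load fisheriris
Mdl = fitcdiscr(meas,species);

% Hypothetical custom loss with the required (C,S,W,Cost) signature.
% sum(C.*S,2) extracts each observation's score for its true class;
% the observation is misclassified if another class scores higher.
% Cost is accepted but not used by this particular loss.
errLoss = @(C,S,W,Cost) sum(W .* (sum(C.*S,2) < max(S,[],2))) / sum(W);

Lcustom  = resubLoss(Mdl,LossFun=errLoss);
Lbuiltin = resubLoss(Mdl,LossFun="classiferror");
```

Dividing by sum(W) is redundant when the weights already sum to 1, but it keeps the function correct if they do not.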

More About

Classification loss functions measure the predictive inaccuracy of classification models. When you compare the same type of loss among many models, a lower loss indicates a better predictive model.

Consider the following scenario.

L is the weighted average classification loss.

n is the sample size.

For binary classification:

- $y_j$ is the observed class label. The software codes it as $-1$ or 1, indicating the negative or positive class (or the first or second class in the ClassNames property), respectively.
- $f(X_j)$ is the positive-class classification score for observation (row) j of the predictor data X.
- $m_j = y_j f(X_j)$ is the classification score for classifying observation j into the class corresponding to $y_j$. Positive values of $m_j$ indicate correct classification and do not contribute much to the average loss. Negative values of $m_j$ indicate incorrect classification and contribute significantly to the average loss.

For algorithms that support multiclass classification (that is, $K \ge 3$):

- $y_j^*$ is a vector of $K-1$ zeros, with 1 in the position corresponding to the true, observed class $y_j$. For example, if the true class of the second observation is the third class and $K = 4$, then $y_2^* = [0\ 0\ 1\ 0]'$. The order of the classes corresponds to the order in the ClassNames property of the input model.
- $f(X_j)$ is the length-K vector of class scores for observation j of the predictor data X. The order of the scores corresponds to the order of the classes in the ClassNames property of the input model.
- $m_j = y_j^{*\prime} f(X_j)$. Therefore, $m_j$ is the scalar classification score that the model predicts for the true, observed class.

The weight for observation j is $w_j$. The software normalizes the observation weights so that they sum to the corresponding prior class probability stored in the Prior property. Therefore,

$$\sum_{j=1}^{n} w_j = 1.$$
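To make the multiclass definitions concrete, this sketch builds the indicator vectors $y_j^*$ as rows of a logical matrix, computes $m_j$ from the posterior scores returned by resubPredict, and normalizes the weights. It assumes that Mdl.W holds the training observation weights; the names Ystar, m, and w are illustrative.

```matlab
load fisheriris
Mdl = fitcdiscr(meas,species);

% f(X_j) for every training observation: n-by-K posterior scores.
[~,f] = resubPredict(Mdl);

% y_j*: one-hot indicator of the true class, columns in ClassNames order.
[~,trueIdx] = ismember(Mdl.Y,Mdl.ClassNames);
Ystar = false(size(f));
Ystar(sub2ind(size(Ystar),(1:numel(trueIdx))',trueIdx)) = true;

% m_j = y_j*' f(X_j): the score the model assigns to the true class.
m = sum(Ystar .* f,2);

% Observation weights rescaled to sum to 1 (assumption about Mdl.W).
w = Mdl.W / sum(Mdl.W);
```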

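Given this scenario, the following table describes the supported loss functions that you can specify by using the LossFun name-value argument.

| Loss Function | Value of LossFun | Equation |
|---|---|---|
| Binomial deviance | "binodeviance" | $L = \sum_{j=1}^{n} w_j \log\{1 + \exp[-2 m_j]\}$ |
| Observed misclassification cost | "classifcost" | $L = \sum_{j=1}^{n} w_j c_{y_j \hat{y}_j}$, where $\hat{y}_j$ is the class label corresponding to the class with the maximal score, and $c_{y_j \hat{y}_j}$ is the user-specified cost of classifying an observation into class $\hat{y}_j$ when its true class is $y_j$. |
| Misclassified rate in decimal | "classiferror" | $L = \sum_{j=1}^{n} w_j I\{\hat{y}_j \neq y_j\}$, where $I\{\cdot\}$ is the indicator function. |
| Cross-entropy loss | "crossentropy" | The weighted cross-entropy loss is $L = -\sum_{j=1}^{n} \frac{\tilde{w}_j \log(m_j)}{K n}$, where the weights $\tilde{w}_j$ are normalized to sum to n instead of 1. |
| Exponential loss | "exponential" | $L = \sum_{j=1}^{n} w_j \exp(-m_j)$ |
| Hinge loss | "hinge" | $L = \sum_{j=1}^{n} w_j \max\{0, 1 - m_j\}$ |
| Logistic loss | "logit" | $L = \sum_{j=1}^{n} w_j \log(1 + \exp(-m_j))$ |
| Minimal expected misclassification cost | "mincost" | The software computes the weighted minimal expected classification cost using this procedure for observations j = 1,...,n: (1) estimate the expected misclassification cost of classifying $X_j$ into class k, $\gamma_{jk} = (f(X_j)' C)_k$, where $f(X_j)$ is the column vector of class posterior probabilities and C is the cost matrix stored in the Cost property; (2) predict the class with minimal expected cost, $\hat{y}_j = \arg\min_{k=1,\dots,K} \gamma_{jk}$; (3) using C, identify the cost $c_j$ incurred by that prediction. The weighted average of the minimal expected misclassification cost loss is $L = \sum_{j=1}^{n} w_j c_j$. |
| Quadratic loss | "quadratic" | $L = \sum_{j=1}^{n} w_j (1 - m_j)^2$ |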
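The three-step "mincost" procedure in the table can be reproduced by hand. The following sketch computes $\gamma_{jk}$, $\hat{y}_j$, and $c_j$ from the posterior probabilities and the Cost property. It assumes that normalizing Mdl.W to sum to 1 gives the weights $w_j$, which holds for this equally weighted iris fit with equal class sizes.

```matlab
load fisheriris
Mdl = fitcdiscr(meas,species);

% Posterior probabilities f(X_j) for the training data (n-by-K).
[~,posterior] = resubPredict(Mdl);

% Column index of the true class y_j for each observation.
[~,trueIdx] = ismember(Mdl.Y,Mdl.ClassNames);

% Step 1: gamma(j,k) = expected cost of assigning observation j to class k.
gamma = posterior * Mdl.Cost;              % n-by-K
% Step 2: predict the class with minimal expected cost.
[~,predIdx] = min(gamma,[],2);
% Step 3: cost actually incurred by each prediction, Cost(true,predicted).
c = Mdl.Cost(sub2ind(size(Mdl.Cost),trueIdx,predIdx));

% Weighted average with weights rescaled to sum to 1 (assumption).
w = Mdl.W / sum(Mdl.W);
Lmanual = sum(w .* c);
```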

If you use the default cost matrix (whose element value is 0 for correct classification and 1 for incorrect classification), then the loss values for "classifcost", "classiferror", and "mincost" are identical. For a model with a nondefault cost matrix, the "classifcost" loss is equivalent to the "mincost" loss most of the time. These losses can be different if prediction into the class with maximal posterior probability is different from prediction into the class with minimal expected cost. Note that "mincost" is appropriate only if classification scores are posterior probabilities.
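The following sketch sets up that comparison with a nondefault cost matrix. The matrix costMat is a hypothetical example that makes misclassifying the third class (virginica, the third entry of Mdl.ClassNames for these labels) five times as costly. Whether "classifcost" and "mincost" actually differ depends on the data, so the script only computes them.

```matlab
load fisheriris

% Hypothetical cost matrix: costMat(i,j) is the cost of predicting class j
% when the true class is i, with classes in Mdl.ClassNames order.
K = 3;
costMat = ones(K) - eye(K);
costMat(3,[1 2]) = 5;   % misclassifying the third class costs 5

Mdl = fitcdiscr(meas,species,Cost=costMat);

Lclassif = resubLoss(Mdl,LossFun="classifcost");
Lmin     = resubLoss(Mdl,LossFun="mincost");
Lerr     = resubLoss(Mdl,LossFun="classiferror");
```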

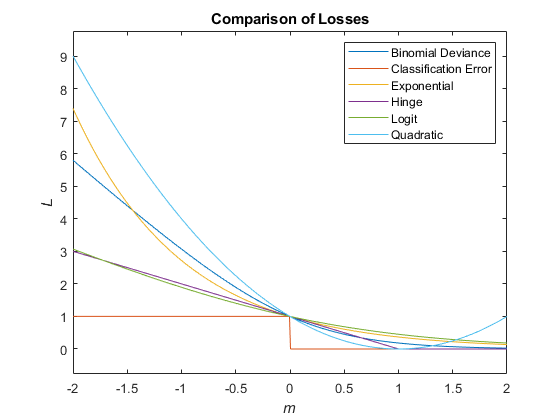

This figure compares the loss functions (except "classifcost", "crossentropy", and "mincost") over the score m for one observation. Some functions are normalized to pass through the point (0,1).
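Curves like these can be approximated by evaluating the per-observation terms from the loss table on a grid of scores m. This sketch does not apply the rescaling used in the figure, so some curves do not pass through (0,1), and the step function for m < 0 is an assumed stand-in for the misclassification indicator.

```matlab
% Grid of scores m for a single observation.
m = linspace(-2,2,401)';

% Per-observation loss terms from the loss table (unweighted, unscaled).
losses = [double(m < 0), ...          % misclassification indicator
          log(1 + exp(-2*m)), ...     % binomial deviance
          exp(-m), ...                % exponential
          max(0,1 - m), ...           % hinge
          log(1 + exp(-m)), ...       % logistic
          (1 - m).^2];                % quadratic

plot(m,losses,LineWidth=1)
legend(["classiferror","binodeviance","exponential","hinge","logit","quadratic"])
xlabel("m")
ylabel("Loss")
```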

The posterior probability that a point x belongs to class k is proportional to the product of the prior probability and the multivariate normal density. The density function of the multivariate normal with 1-by-d mean $\mu_k$ and d-by-d covariance $\Sigma_k$ at a 1-by-d point x is

$$P(x \mid k) = \frac{1}{(2\pi)^{d/2} |\Sigma_k|^{1/2}} \exp\left(-\frac{1}{2}(x - \mu_k)\Sigma_k^{-1}(x - \mu_k)^T\right),$$

where $|\Sigma_k|$ is the determinant of $\Sigma_k$, and $\Sigma_k^{-1}$ is the inverse matrix.

Let P(k) represent the prior probability of class k. Then the posterior probability that an observation x is of class k is

$$\hat{P}(k \mid x) = \frac{P(x \mid k) P(k)}{P(x)},$$

where P(x) is a normalization constant, the sum over k of P(x|k)P(k).
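For a linear discriminant model, where all classes share one covariance matrix, the formulas above can be checked numerically. This sketch assumes a default fitcdiscr fit (linear discriminant, no regularization), so Mdl.Sigma is the d-by-d pooled covariance and Mdl.Mu holds one class mean per row. It evaluates $P(x \mid k)$ with mvnpdf and compares the normalized result with the posteriors from resubPredict.

```matlab
load fisheriris
Mdl = fitcdiscr(meas,species);       % linear: one pooled covariance

X = Mdl.X;
K = numel(Mdl.ClassNames);
n = size(X,1);

% Numerators P(x|k) P(k) for every observation and class.
joint = zeros(n,K);
for k = 1:K
    joint(:,k) = mvnpdf(X,Mdl.Mu(k,:),Mdl.Sigma) * Mdl.Prior(k);
end

% Divide by P(x) = sum over k of P(x|k)P(k) to obtain posteriors.
posteriorManual = joint ./ sum(joint,2);

% Compare with the posteriors the model itself reports.
[~,posterior] = resubPredict(Mdl);
maxDiff = max(abs(posteriorManual - posterior),[],"all")
```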

The prior probability is one of three choices:

- 'uniform': The prior probability of class k is one over the total number of classes.
- 'empirical': The prior probability of class k is the number of training samples of class k divided by the total number of training samples.
- Custom: The prior probability of class k is the kth element of the prior vector. See fitcdiscr.

After creating a classification model (Mdl), you can set the prior using dot notation:

Mdl.Prior = v;

where v is a vector of positive elements representing the frequency with which each class occurs. You do not need to retrain the classifier when you set a new prior.
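The following sketch shows both ways of setting the prior: by name when training with fitcdiscr, and afterward by dot notation. The vector [1 1 2] is a hypothetical choice that weights the third class more heavily; resubLoss is simply called again on the modified model, without refitting.

```matlab
load fisheriris

% Prior chosen by name at training time.
MdlUniform = fitcdiscr(meas,species,Prior="uniform");
Luniform = resubLoss(MdlUniform);

% Prior changed after training by dot notation (no retraining needed).
Mdl = fitcdiscr(meas,species);
Mdl.Prior = [1 1 2];   % hypothetical relative class frequencies
Lnew = resubLoss(Mdl);

disp(Mdl.Prior)        % inspect the prior actually stored in the model
```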

The matrix of expected costs per observation is defined in Cost.

Version History

Introduced in R2011b

Starting in R2023b, classification model object functions use observations with missing predictor values as part of resubstitution ("resub") and cross-validation ("kfold") computations for classification edges, losses, margins, and predictions.

In previous releases, the software omitted observations with missing predictor values from the resubstitution and cross-validation computations.

