양자화

신경망 파라미터를 낮은 정밀도의 데이터형으로 양자화, 고정소수점 코드 생성을 위해 딥러닝 신경망 준비

계층의 가중치, 편향 및 활성화를 정수 데이터형으로 스케일링한 낮은 정밀도로 양자화합니다. 그런 다음 양자화된 이러한 신경망에서 GPU, FPGA 또는 CPU 배포용 C/C++, CUDA® 또는 HDL 코드를 생성할 수 있습니다.

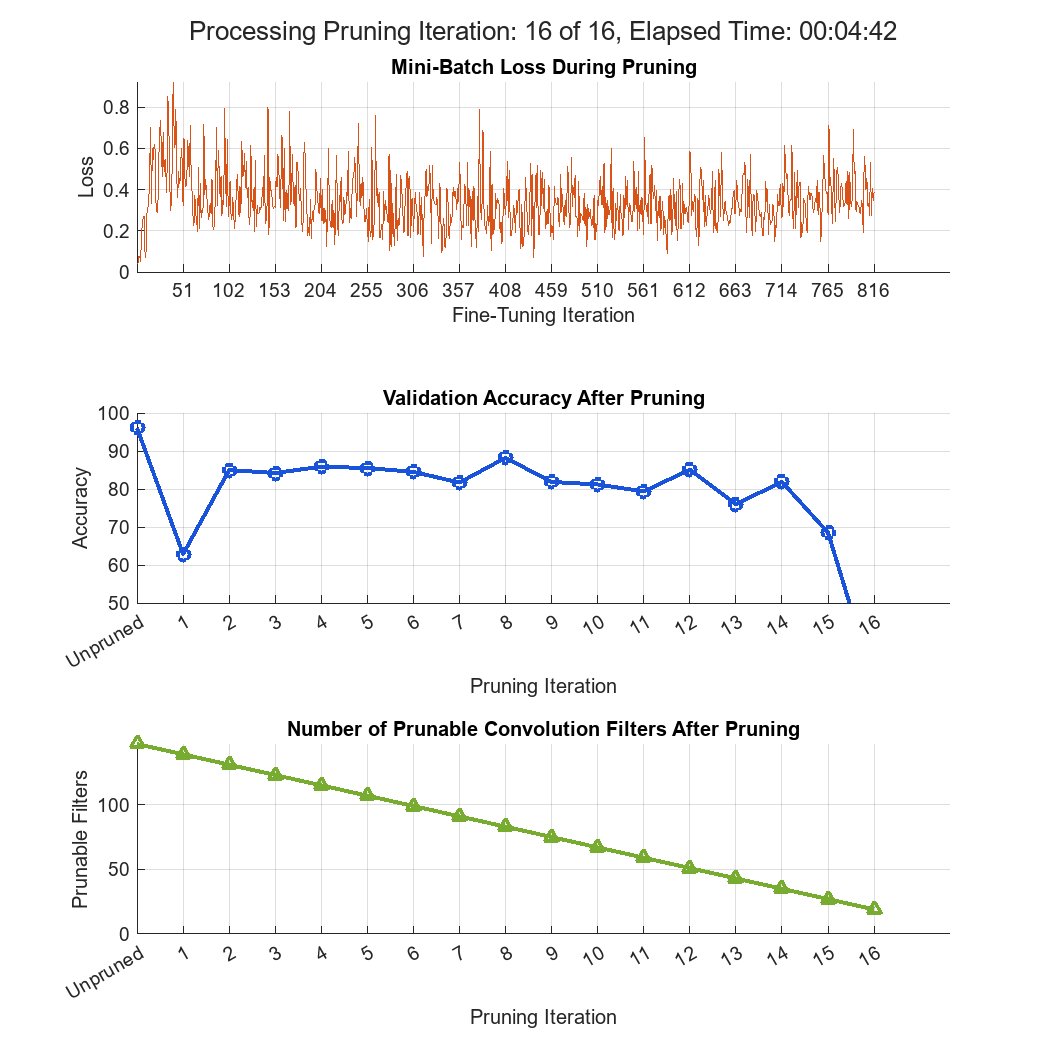

Deep Learning Toolbox™ Model Compression Library에서 사용 가능한 압축 기법에 대한 자세한 개요는 Reduce Memory Footprint of Deep Neural Networks 항목을 참조하십시오.

함수

dlquantizer | Quantize a deep neural network to 8-bit scaled integer data types |

dlquantizationOptions | Options for quantizing a trained deep neural network |

prepareNetwork | Prepare deep neural network for quantization (R2024b 이후) |

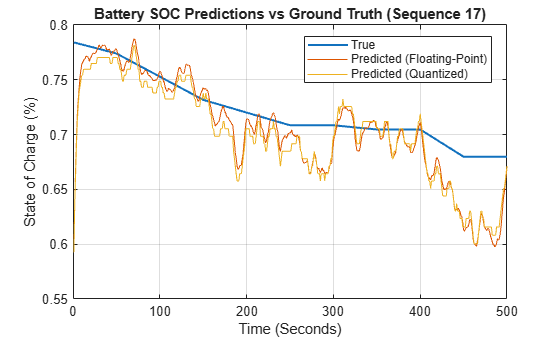

calibrate | Simulate and collect ranges of a deep neural network |

quantize | Quantize deep neural network (R2022a 이후) |

validate | Quantize and validate a deep neural network |

quantizationDetails | 신경망의 양자화 세부 정보 표시 (R2022a 이후) |

estimateNetworkMetrics | Estimate network metrics for specific layers of a neural network (R2022a 이후) |

equalizeLayers | Equalize layer parameters of deep neural network (R2022b 이후) |

exportNetworkToSimulink | Generate Simulink model that contains deep learning layer blocks and subsystems that correspond to deep learning layer objects (R2024b 이후) |

앱

| 심층 신경망 양자화기 | Quantize deep neural network to 8-bit scaled integer data types |

도움말 항목

양자화 이해하기

- Quantization of Deep Neural Networks

Learn about deep learning quantization tools and workflows. - Data Types and Scaling for Quantization of Deep Neural Networks

Understand effects of quantization and how to visualize dynamic ranges of network convolution layers.

배포 전 워크플로

- Prepare Data for Quantizing Networks

Learn about supported data formats for quantization workflows. - Quantize Multiple-Input Network Using Image and Feature Data

Quantize a network with multiple inputs. - Export Quantized Networks to Simulink and Generate Code

Export a quantized neural network to Simulink and generate code from the exported model. - Quantization-Aware Training with Pseudo-Quantization Noise

Perform quantization-aware training with pseudo-quantization noise on the MobileNet-V2 network. (R2026a 이후)

배포

- Quantize Semantic Segmentation Network and Generate CUDA Code

Quantize a convolutional neural network trained for semantic segmentation and generate CUDA code. - Classify Images on FPGA by Using Quantized GoogLeNet Network (Deep Learning HDL Toolbox)

This example shows how to use the Deep Learning HDL Toolbox™ to deploy a quantized GoogleNet network to classify an image. - Compress Image Classification Network for Deployment to Resource-Constrained Embedded Devices

Reduce the memory footprint and computation requirements of an image classification network for deployment to resource-constrained embedded devices such as the Raspberry Pi®.

고려 사항

- Quantization Workflow System Requirements

See what products are required for the quantization of deep neural networks. - Supported Layers for Quantization

Learn which deep neural network layers are supported for quantization.