audioDelta

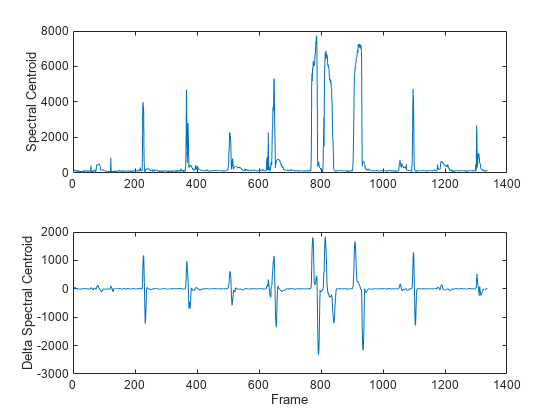

Compute delta features

Syntax

delta = audioDelta(x,deltaWindowLength)

delta = audioDelta(x,deltaWindowLength,initialCondition)

[delta,finalCondition] = audioDelta(x,___)

Description

[delta,finalCondition] = audioDelta(x,___) also returns the final condition of the filter.

Examples

Input Arguments

Output Arguments

More About

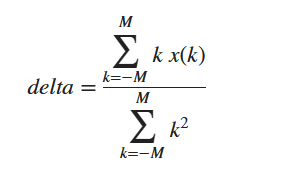

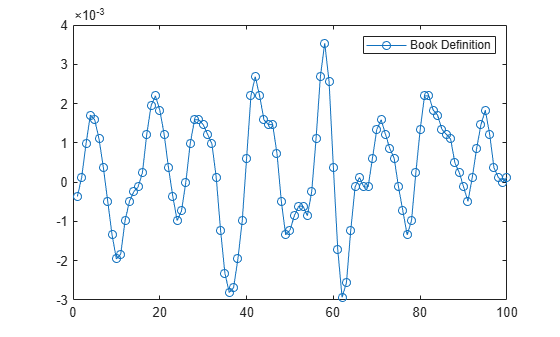

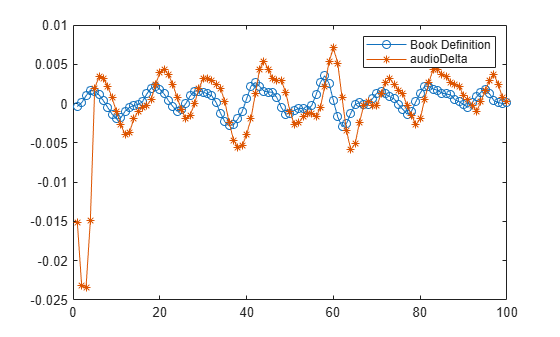

Algorithms

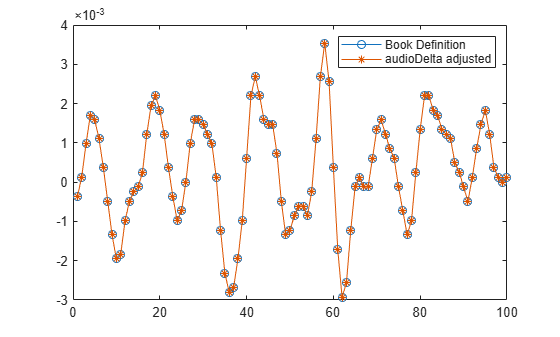

To support causal streaming, audioDelta introduces a reporting delay of the least-squares approximation equal to floor(deltaWindowLength/2). To learn how to align the function with the classic definition found in [1], see Compare audioDelta with Book Definition.
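The reporting delay of floor(deltaWindowLength/2) samples can be illustrated with a hand-written causal filter. The following is a minimal sketch that assumes the delta is the textbook least-squares slope over a centered window of odd length N, implemented with the base MATLAB filter function. The variable names (N, M, b, d) and the ramp input are illustrative assumptions, and the sketch is not taken from the audioDelta implementation.

```matlab
% Sketch (assumption): least-squares slope estimate as a causal FIR filter.
N = 5;                            % delta window length, assumed odd
M = floor(N/2);                   % half-window; also the reporting delay

% Centered slope estimate: sum(m*x(k+m)) / sum(m^2), for m = -M..M.
% Reversing the weights turns it into a causal filter whose output at
% sample n is the centered estimate for sample n-M.
b = (M:-1:-M) / sum((-M:M).^2);

x = (1:12)';                      % test ramp with a constant slope of 1
d = filter(b, 1, x);              % causal filtering with zero initial state

% d(1:N-1) is a start-up transient caused by the zero initial state.
% From sample N onward the window is full and the estimated slope is 1.
steadyState = d(N:end);
```

Because the output at sample n describes the slope around sample n-M, discarding the first M outputs (or shifting the result by M samples) lines the values up with the centered definition. If Audio Toolbox is available, d can be compared against audioDelta(x,N); agreement is expected only to the extent that the assumptions above match the function's behavior.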

References

[1] Rabiner, Lawrence R., and Ronald W. Schafer. Theory and Applications of Digital Speech Processing. Upper Saddle River, NJ: Pearson, 2010.

Extended Capabilities

Version History

Introduced in R2020b