Ship Detection from Sentinel-1 C Band SAR Data Using YOLOX Object Detection

This example shows how to detect ships from Sentinel-1 C Band SAR Data using YOLOX object detection.

Synthetic aperture radar (SAR) remote sensing is an important method for marine monitoring due to its all-day, all-weather capability. Ship detection in SAR images plays a critical role in shipwreck rescue, fishery and traffic management, and other marine applications. SAR imagery provides data with high spatial and temporal resolution, which is useful for ship detection.

This example shows how to use a pretrained YOLOX object detection network to perform these tasks.

- Detect ships in a sample test image.
- Detect ships in a large-scale test image with block processing.
- Plot the large-scale test image on a map and show the detected ships on the map.

This example also shows how you can train the YOLOX object detector from scratch and evaluate the detector on test data.

Detect Ships Using Pretrained Network

Load Pretrained Network

Create a folder in which to store the pretrained YOLOX object detection network and test images. You can use the pretrained network and test images to run the example without waiting for training to complete.

Download the pretrained network and load it into the workspace by using the helperDownloadObjectDetector helper function. The helper function is attached to this example as a supporting file.

dataDir = "shipDetection";

detector = helperDownloadObjectDetector(dataDir);

Detect Ships in Test Image Using Pretrained Network

Read a sample test image and detect the ships it contains using the pretrained object detection network. The size of the test image must be the same as the size of the input to the object detection network. Use a threshold of 0.55 to reduce false positives.

testImg = fullfile(dataDir,"SARShipDetectionYoloX","test1.jpg");
Img = imread(testImg);
[bboxes,scores,labels] = detect(detector,Img,Threshold=0.55);

Display the test image and the output with bounding boxes for the detected ships as a montage.

detectedIm = insertObjectAnnotation(Img,"Rectangle",bboxes,scores,LineWidth=2,Color="red");
figure
montage({Img,detectedIm},BorderSize=8)
title("Detected Ships in Test Image")

Detect Ships in Large-Scale Test Image Using Pretrained Network

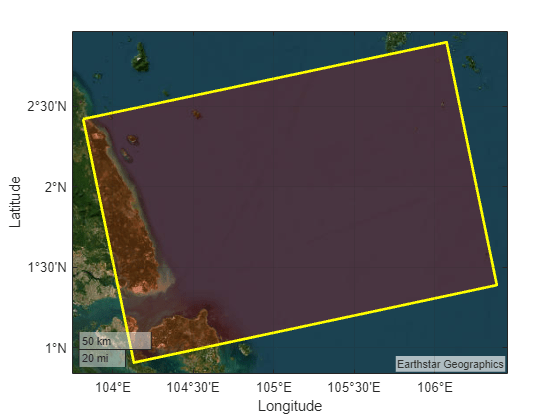

Create a large-scale test image of the Singapore Strait region. Project the latitude and longitude of the corners of the region to world x-y coordinates, and represent the geographic extent of the region using a mappolyshape (Mapping Toolbox) object.

lat = [2.420848 2.897566 1.389878 0.908674 2.420848];
lon = [103.816467 106.073883 106.386215 104.131699 103.816467];
p = projcrs(32648);
[xb,yb] = projfwd(p,lat,lon);
dataRegion = mappolyshape(xb,yb);
dataRegion.ProjectedCRS = p;

Plot the region of interest using satellite imagery.

figure
geoplot(dataRegion, ...
    LineWidth=2, ...
    EdgeColor="yellow", ...
    FaceColor="red", ...
    FaceAlpha=0.2)
geobasemap satellite

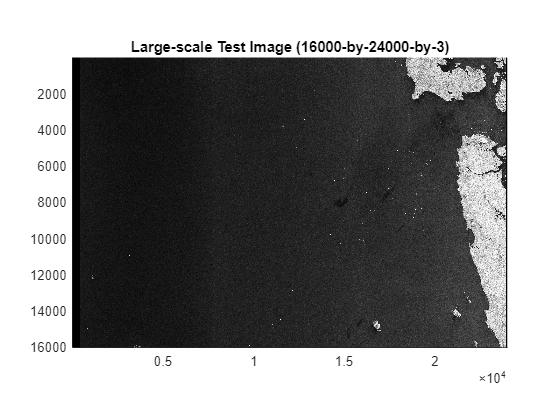

To perform ship detection on the large-scale image, use the blockedImage (Image Processing Toolbox) object. You can use this object to load a large-scale image and process it on systems with limited resources.

Create the blocked image. Display the image using the bigimageshow (Image Processing Toolbox) function.

largeImg = fullfile(dataDir,"SARShipDetectionYoloX","test-ls.jpg");
bim = blockedImage(largeImg);
figure
bigimageshow(bim)
title("Large-scale Test Image (16000-by-24000-by-3)")

Set the block size to the input size of the detector.

blockSize = [640 640 3];

Create a function handle to the detectShipsLargeImage helper function. The helper function, which contains the ship detection algorithm definition, is attached to this example as a supporting file.

detectionFcn = @(bstruct) detectShipsLargeImage(bstruct,detector);

Produce a processed blocked image bimProc with annotations for the bounding boxes by using the apply (Image Processing Toolbox) object function with the detectionFcn function handle. This function call also saves the bounding boxes in the getBboxes MAT file.

bimProc = apply(bim,detectionFcn,BlockSize=blockSize);

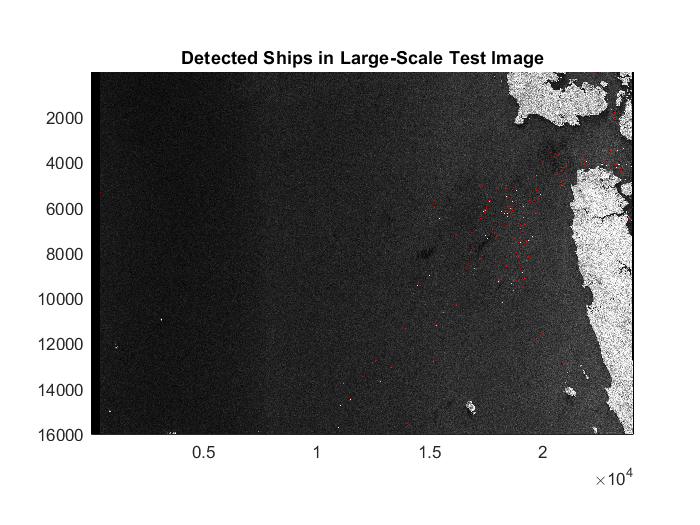
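The block processing step can be illustrated with plain arithmetic. The following is a minimal sketch, not the code of the detectShipsLargeImage helper function (which is not shown here). It counts the blocks that cover the large-scale image and shows how a bounding box found inside one block could be shifted into the coordinates of the full image. The block position and the local bounding box are hypothetical values chosen for illustration.

```matlab
% Sketch only: plain arithmetic, no toolbox calls.
imageSize = [16000 24000];   % rows and columns of the large-scale image
blockSize = [640 640];       % rows and columns of one block

% Number of blocks along each dimension. Because 24000 is not a multiple
% of 640, the last column of blocks is only partially filled.
numBlocks = ceil(imageSize ./ blockSize);
totalBlocks = prod(numBlocks);

% Hypothetical detection in the block at block row 3, block column 5.
blockSub = [3 5];
blockStart = (blockSub - 1) .* blockSize + 1;   % [row col] of first pixel in block
localBox = [100 200 12 11];                     % [x y width height] inside the block

% Shift x by the column offset and y by the row offset of the block.
globalBox = localBox + [blockStart(2)-1, blockStart(1)-1, 0, 0];

fprintf("%d blocks (%d-by-%d)\n",totalBlocks,numBlocks(1),numBlocks(2));
disp(globalBox)
```

In this sketch, only the x and y entries of the bounding box change, because the width and height of a box do not depend on which block it was found in.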

Display the output with bounding boxes containing the detected ships.

figure
bigimageshow(bimProc)
title("Detected Ships in Large-Scale Test Image")
uicontrol(Visible="off")

A bounding box indicates the region of interest (ROI) of the detected ship. Get the world coordinates of the detected ship ROIs from the bounding boxes using the getBboxesWorldCoords helper function. The getBboxesWorldCoords helper function is attached to this example as a supporting file. Because the image metadata is not available, the helper function manually creates the spatial referencing object by setting image attributes such as pixel spacing and affine rotation.

Store the x- and y- world coordinates of the ship bounding boxes in the polyX and polyY cell arrays, respectively.

[polyX,polyY] = getBboxesWorldCoords(xb,yb,p);
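To make the conversion from pixel coordinates to world coordinates concrete, the following sketch maps the corners of one bounding box to world x-y coordinates. It is not the getBboxesWorldCoords helper function. It assumes a north-up image with no rotation, whereas the helper function also sets an affine rotation. The pixel spacing, the world location of the first pixel, and the bounding box are all hypothetical values.

```matlab
% Sketch only: north-up image, no rotation (a simplifying assumption).
pixelSpacing = 10;     % hypothetical ground distance per pixel, in meters
x0 = 400000;           % hypothetical world x of the center of pixel (1,1)
y0 = 300000;           % hypothetical world y of the center of pixel (1,1)

bbox = [2660 1480 12 11];   % hypothetical [x y width height] in pixels

% Column and row positions of the left/right and top/bottom box edges.
colEdges = [bbox(1), bbox(1) + bbox(3)];
rowEdges = [bbox(2), bbox(2) + bbox(4)];

% World x increases with the column index. World y decreases with the row index.
toWorldX = @(col) x0 + (col - 1) * pixelSpacing;
toWorldY = @(row) y0 - (row - 1) * pixelSpacing;

% Closed polygon: upper left, upper right, lower right, lower left, upper left.
polyXOne = toWorldX(colEdges([1 2 2 1 1]));
polyYOne = toWorldY(rowEdges([1 1 2 2 1]));

disp([polyXOne; polyYOne])
```

The sketch produces the vertices of a single polygon. Collecting one such pair of vectors per bounding box into cell arrays gives inputs of the same form as polyX and polyY.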

Create a mappolyshape (Mapping Toolbox) object using the polyX and polyY cell arrays containing the world coordinates for ship bounding boxes.

shape = mappolyshape(polyX,polyY);
shape.ProjectedCRS = p;

Visualize the ship ROIs in a geographic axes with the data region.

figure
geoplot(dataRegion, ...
    LineWidth=2, ...
    EdgeColor="yellow", ...
    FaceColor="red", ...
    FaceAlpha=0.2)
hold on
geoplot(shape, ...
    EdgeColor="blue", ...
    FaceColor="cyan", ...
    FaceAlpha=0.2)
geobasemap satellite

Train and Evaluate YOLOX Object Detection Network for Ship Detection

This part of the example shows how to train and evaluate a YOLOX object detection network from scratch.

Load Data

This example uses the Large-Scale SAR Ship Detection Dataset-v1.0 (LS-SSDD-v1.0) [1], which was built from Sentinel-1 satellite imagery. The data set contains 15 large-scale spaceborne SAR images whose ground truths are correctly labeled. The polarization modes include VV and VH, and the imaging mode is interferometric wide (IW). The data set is characterized by large-scale ocean observation, small ship detection, abundant pure backgrounds, a fully automatic detection flow, and numerous standardized research baselines. The size of the large-scale images is unified to 24000-by-16000 pixels, and each image is stored as a three-channel, 24-bit, grayscale JPG file. The annotation file is an XML file that records the target location information, which comprises Xmin, Xmax, Ymin, and Ymax. To facilitate network training, the large-scale images are directly cut into 9000 subimages with a size of 800-by-800 pixels each.

To download the data set, go to the website of the Large-Scale SAR Ship Detection Dataset-v1.0 and click the Download links for the JPEGImages_sub_train, JPEGImages_sub_test, and Annotations_sub RAR files, located at the bottom of the webpage. You must provide an email address or register on the website to download the data. Extract the contents of JPEGImages_sub_train, JPEGImages_sub_test, and Annotations_sub to folders with the names JPEGImages_sub_train, JPEGImages_sub_test, and Annotations_sub, respectively, in the current working folder.

The data set uses 6000 images (600 subimages each from the first 10 large-scale images) as the training data and the remaining 3000 images (600 subimages each from the remaining 5 large-scale images) as the test data. In this example, you use 6600 images (600 subimages each from the first 11 large-scale images) as the training data and the remaining 2400 images (600 subimages each from the remaining 4 large-scale images) as the test data. Hence, move the 600 image files with names 11_1_1 to 11_20_30 from the JPEGImages_sub_test folder to the JPEGImages_sub_train folder. Move the annotation files with names 01_1_1 to 11_20_30, corresponding to the training data, to a folder with the name Annotations_train in the current working folder, and the annotation files with names 12_1_1 to 15_20_30, corresponding to the test data, to a folder named Annotations_test in the current working folder.
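The counts in this split can be checked with a short script. The following sketch also generates the 600 file names for large-scale image 11 and moves the matching files if they exist. It assumes that subimage names follow the pattern image_row_column with a two-digit image number and a .jpg extension, which is inferred from names such as 11_20_30 and is not confirmed by the data set documentation.

```matlab
% Sketch only: verify the split arithmetic and list the files to move.
subimageGrid = [16000 24000] ./ 800;        % 20 rows by 30 columns of subimages
perImage = prod(subimageGrid);              % subimages per large-scale image
numTrain = 11 * perImage;                   % images 01 through 11
numTest = 4 * perImage;                     % images 12 through 15

% Assumed naming pattern: <image>_<row>_<column>.jpg
[rowIdx,colIdx] = ndgrid(1:subimageGrid(1),1:subimageGrid(2));
nameParts = [repmat(11,perImage,1), rowIdx(:), colIdx(:)];
namesToMove = compose("%02d_%d_%d.jpg",nameParts);

% Move each file only if both the file and the destination folder exist.
srcFolder = "JPEGImages_sub_test";
dstFolder = "JPEGImages_sub_train";
for k = 1:numel(namesToMove)
    src = fullfile(srcFolder,namesToMove(k));
    if isfile(src) && isfolder(dstFolder)
        movefile(src,dstFolder);
    end
end

fprintf("%d training and %d test images\n",numTrain,numTest);
```

The same name list, with the extension changed to match the annotation files, could be used to sort the annotations into the Annotations_train and Annotations_test folders.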

Prepare Data for Training

To train and test the network only on images that contain ships, use the createDataTable helper function to perform these steps.

- Discard images that do not have any ships from the data set. This operation leaves 1350 images in the training set and 509 images in the test set.
- Organize the training and test data into two separate tables, each with two columns, where the first column contains the image file paths and the second column contains the ship bounding boxes.

The createDataTable helper function is attached to this example as a supporting file.
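The two steps above can be sketched in a few lines. This is not the createDataTable helper function, and it skips the XML parsing. It assumes that the corner coordinates have already been read from the annotation files, that the width and height of a box are the differences between the corner coordinates, and that an image without ships has an empty box array. The corner values are hypothetical.

```matlab
% Sketch only: convert corner annotations to [x y width height] boxes
% and keep only the images that contain at least one ship.

% Hypothetical corners for one image, one row per ship: [Xmin Ymin Xmax Ymax].
corners = [ 95 176 107 187
           300 420 318 431];

% Assumed convention: width = Xmax - Xmin and height = Ymax - Ymin.
bboxes = [corners(:,1:2), corners(:,3:4) - corners(:,1:2)];

% Hypothetical boxes for three images. The second image has no ships.
imageFileName = ["a.jpg"; "b.jpg"; "c.jpg"];
ship = {bboxes; zeros(0,4); bboxes(1,:)};

% Discard the images without ships, then build the two-column table.
hasShip = ~cellfun(@isempty,ship);
dataTbl = table(imageFileName(hasShip),ship(hasShip), ...
    VariableNames=["imageFileName" "ship"]);

disp(dataTbl)
```

The resulting table has the same two-column layout that the boxLabelDatastore object expects for a single class named ship.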

trainingSet = fullfile(pwd,"JPEGImages_sub_train");
annotationsTrain = fullfile(pwd,"Annotations_train");
trainingDataTbl = createDataTable(trainingSet,annotationsTrain);
testSet = fullfile(pwd,"JPEGImages_sub_test");
annotationsTest = fullfile(pwd,"Annotations_test");
testDataTbl = createDataTable(testSet,annotationsTest);

Display the first few rows of the training data table.

trainingDataTbl(1:5,:)

ans=5×2 table
                         imageFileName                                  ship
    ________________________________________________________    ________________
    "C:\Dev\shipDetection\JPEGImages_sub_train/01_10_12.jpg"    {2×4 double    }
    "C:\Dev\shipDetection\JPEGImages_sub_train/01_10_17.jpg"    {[95 176 12 11]}
    "C:\Dev\shipDetection\JPEGImages_sub_train/01_10_20.jpg"    {4×4 double    }
    "C:\Dev\shipDetection\JPEGImages_sub_train/01_10_21.jpg"    {4×4 double    }
    "C:\Dev\shipDetection\JPEGImages_sub_train/01_10_22.jpg"    {2×4 double    }

Create datastores for loading the image and label data during training and evaluation by using the imageDatastore and boxLabelDatastore (Computer Vision Toolbox) objects.

imdsTrain = imageDatastore(trainingDataTbl.imageFileName);
bldsTrain = boxLabelDatastore(trainingDataTbl(:,"ship"));
imdsTest = imageDatastore(testDataTbl.imageFileName);
bldsTest = boxLabelDatastore(testDataTbl(:,"ship"));

Combine the image and box label datastores.

trainingData = combine(imdsTrain,bldsTrain);
testData = combine(imdsTest,bldsTest);

Display one of the training images and box labels.

data = read(trainingData);

Img = data{1};

bbox = data{2};

annotatedImage = insertShape(Img,"Rectangle",bbox);

figure

imshow(annotatedImage)

title("Sample Annotated Training Image")

Augment Training Data

Augment the training data by randomly flipping the image and associated box labels horizontally using the transform function with the augmentData helper function. The augmentData helper function is attached to this example as a supporting file. Augmentation increases the variability of the training data without increasing the number of labeled training samples. To ensure unbiased evaluation, do not apply data augmentation to the test data.

augmentedTrainingData = transform(trainingData,@augmentData);

Read the same image multiple times and display the augmented training data.

augmentedData = cell(4,1);
for k = 1:4
    data = read(augmentedTrainingData);
    augmentedData{k} = insertShape(data{1},"rectangle",data{2});
    reset(augmentedTrainingData);
end
figure
montage(augmentedData,BorderSize=8)
title("Sample Augmented Training Data")
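The core of a horizontal flip augmentation is that the bounding boxes must be mirrored together with the image. The following sketch shows that step on a tiny synthetic image. It is not the augmentData helper function, and it leaves out the random choice of whether to flip. It assumes that a box [x y width height] covers the pixel columns x through x + width - 1, so the image, the box, and the formula are illustrative only.

```matlab
% Sketch only: mirror an image and its bounding box about the vertical axis.
img = reshape(uint8(1:40),[4 10]);   % synthetic image, 4 rows by 10 columns
bbox = [2 3 4 2];                    % hypothetical [x y width height]

imgWidth = size(img,2);
flippedImg = flip(img,2);            % reverse the column order

% Under the assumed convention, column c maps to imgWidth + 1 - c, so the
% new left edge is the mirror of the old right edge, x + width - 1.
flippedBox = bbox;
flippedBox(:,1) = imgWidth - bbox(:,1) - bbox(:,3) + 2;

% The flipped box should contain the mirrored content of the original box.
origPatch = img(bbox(2):bbox(2)+bbox(4)-1, bbox(1):bbox(1)+bbox(3)-1);
newPatch = flippedImg(flippedBox(2):flippedBox(2)+flippedBox(4)-1, ...
    flippedBox(1):flippedBox(1)+flippedBox(3)-1);

disp(flippedBox)
disp(isequal(newPatch,flip(origPatch,2)))
```

Only the x entry of the box changes. The y entry, width, and height stay the same, and applying the flip twice returns the original box.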

Define Network Architecture

Specify the object classes.

classes = ["ship"];

Select the "small-coco" pretrained network as the base network. The "small-coco" network is a pretrained YOLOX deep learning network created using CSP-DarkNet-53 as the base network and trained on the COCO data set.

networkName = "small-coco";

Create a YOLOX object detection network.

pretrainedDetector = yoloxObjectDetector(networkName, classes);

Display the network.

disp(pretrainedDetector)

yoloxObjectDetector with properties:

ClassNames: {'ship'}

InputSize: [640 640 3]

NormalizationStatistics: [1×1 struct]

ModelName: 'small-coco'

Specify Training Options

Train the object detection network using the Adam optimization solver. Specify the hyperparameter settings using the trainingOptions function. Specify a mini-batch size of 8 and a constant learning rate of 0.001 for the entire training run. Use the test data as the validation data. You can experiment with tuning the hyperparameters based on your available processing memory.

options = trainingOptions("adam", ...
    GradientDecayFactor=0.9, ...
    SquaredGradientDecayFactor=0.999, ...
    InitialLearnRate=0.001, ...
    LearnRateSchedule="none", ...
    MiniBatchSize=8, ...
    L2Regularization=0.0005, ...
    MaxEpochs=10, ...
    BatchNormalizationStatistics="moving", ...
    DispatchInBackground=false, ...
    ResetInputNormalization=false, ...
    Shuffle="every-epoch", ...
    VerboseFrequency=20, ...
    CheckpointPath=tempdir, ...
    ValidationData=testData);
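As a rough guide to how long training runs with these options, the following sketch estimates the number of iterations from the 1350 training images, the mini-batch size, and the number of epochs. Whether the last incomplete mini-batch of each epoch is used or discarded is an assumption left open here, so the sketch reports both bounds.

```matlab
% Sketch only: estimate the number of training iterations.
numTrainingImages = 1350;   % training images that contain ships
miniBatchSize = 8;
maxEpochs = 10;
verboseFrequency = 20;

% Lower bound discards the incomplete mini-batch; upper bound keeps it.
itersPerEpochLow = floor(numTrainingImages / miniBatchSize);
itersPerEpochHigh = ceil(numTrainingImages / miniBatchSize);
totalItersLow = itersPerEpochLow * maxEpochs;
totalItersHigh = itersPerEpochHigh * maxEpochs;

% Approximate number of progress lines printed at the verbose frequency.
numProgressLines = floor(totalItersLow / verboseFrequency);

fprintf("%d to %d iterations per epoch, %d to %d in total\n", ...
    itersPerEpochLow,itersPerEpochHigh,totalItersLow,totalItersHigh);
```

Halving the mini-batch size to fit a smaller GPU roughly doubles the number of iterations per epoch.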

Train Network

To train the network, set the doTraining variable to true. By default, the trainYOLOXObjectDetector function uses a GPU if one is available. Otherwise, the function uses the CPU. Training on a GPU requires a Parallel Computing Toolbox™ license and a supported GPU device. For information on supported devices, see GPU Computing Requirements (Parallel Computing Toolbox). To specify the execution environment, use the ExecutionEnvironment training option.

Training takes a few hours on an NVIDIA™ Titan Xp GPU with 12 GB memory. If you have a GPU with less memory, lower the mini-batch size using the trainingOptions function to prevent running out of memory.

doTraining = false;
if doTraining
    disp("Training YOLOX model ...")
    [detector,info] = trainYOLOXObjectDetector(augmentedTrainingData,pretrainedDetector,options);
    save("trainedYOLOX_ship_detector.mat","detector");
end

Evaluate Detector Using Test Data

Evaluate the trained object detector on the test data using the average precision metric. The average precision provides a single number that incorporates the ability of the detector to make correct classifications (precision) and the ability of the detector to find all relevant objects (recall).

Use the detector to detect ships in all images in the test data.

detectionResults = detect(detector,testData,MiniBatchSize=4);

Evaluate the object detector using the average precision metric.

metrics = evaluateObjectDetection(detectionResults,testData);

classID = 1;

precision = metrics.ClassMetrics.Precision{classID};

recall = metrics.ClassMetrics.Recall{classID};

The precision/recall (PR) curve highlights how precise a detector is at varying levels of recall. The ideal precision is 1 at all recall levels. Using more data can improve the average precision but often requires more training time.

Plot the PR curve.

figure
plot(recall,precision)
xlabel("Recall")
ylabel("Precision")
grid on
title(sprintf("Average Precision = %.2f",metrics.ClassMetrics.mAP(classID)))
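To see how precision, recall, and average precision relate, the following sketch computes them by hand for a handful of hypothetical detections. It assumes that the detections are already sorted by descending score and already marked as true or false positives. The average precision here is a simple sum of precision values weighted by recall increments, which may differ from the interpolation that the evaluateObjectDetection function uses.

```matlab
% Sketch only: precision, recall, and a simple average precision estimate.
isTruePositive = [1 1 0 1 0];   % hypothetical detections, best score first
numGroundTruth = 4;             % hypothetical number of labeled ships

% Running counts of true positives and of all detections so far.
cumTP = cumsum(isTruePositive);
numDetections = 1:numel(isTruePositive);

precisionPts = cumTP ./ numDetections;   % fraction of detections that are correct
recallPts = cumTP ./ numGroundTruth;     % fraction of ships that are found

% Weight each precision value by the recall gained at that detection.
recallStep = diff([0 recallPts]);
apEstimate = sum(precisionPts .* recallStep);

fprintf("Average precision estimate = %.4f\n",apEstimate);
```

In this example, one of the four labeled ships is never detected, so the recall stops at 0.75 and the average precision cannot reach 1 even though the first two detections are correct.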

References

[1] Tianwen Zhang, Xiaoling Zhang, Xiao Ke, Xu Zhan, Jun Shi, Shunjun Wei, Dece Pan, et al. “LS-SSDD-v1.0: A Deep Learning Dataset Dedicated to Small Ship Detection from Large-Scale Sentinel-1 SAR Images.” Remote Sensing 12, no. 18 (September 15, 2020): 2997. https://doi.org/10.3390/rs12182997.

See Also

imagePretrainedNetwork | blockedImage (Image Processing Toolbox) | apply (Image Processing Toolbox) | yoloxObjectDetector (Computer Vision Toolbox) | evaluateObjectDetection (Computer Vision Toolbox)

Related Topics

- Map Flood Areas Using Sentinel-1 SAR Imagery (Image Processing Toolbox)