Reconstruct Complete 3-D Scene Using Incremental Structure from Motion

After initialization with two views, the remaining views are processed to incrementally build the 3-D structure of the scene. The process of selecting the next best view is critical because each choice influences the stability and accuracy of all subsequent steps in the reconstruction.

The candidates for the next best view are the unprocessed images that contain a sufficient number of triangulated 3-D points. The optimal choice reduces camera pose uncertainty and contributes well-conditioned points to the structure.

Once the next view is selected, it is processed as follows:

Pose Estimation: Estimate the pose of the next view by solving the Perspective-n-Point (PnP) problem using 2-D feature correspondences to known 3-D points.

Triangulation: Triangulate new 3-D points using the

pointTrackcontaining the next view.Bundle Adjustment: Perform bundle adjustment to jointly refine the pose of the next view, the poses of views that share sufficient tracks with it, and all 3-D points observed by these views.

Re-Triangulation: Triangulate additional 3-D points with refined camera poses after each bundle adjustment. The improved poses increase triangulation accuracy and allow additional tracks to meet quality criteria.

Load Initial Reconstruction Data

First, load initial reconstruction created from the Reconstruct 3-D Scene from Geometrically Refined Pair of Initial Views example.

% Check if a view graph exists in workspace. If not, load it from MAT file. downloadFolder = tempdir; if ~exist("viewGraph", "var") reconstructFile = fullfile(downloadFolder, "sfmTrainingDataTUMRGBD", "initialReconstruction.mat"); reconstructData = load(reconstructFile); viewGraph = reconstructData.viewGraph; wpSet = reconstructData.wpSet; end

Load camera intrinsic parameters and the ground truth of camera poses.

% Load the camera intrinsics and ground truth poses intrinsicsFile = fullfile(downloadFolder, "sfmTrainingDataTUMRGBD", "cameraInfo.mat"); data2 = load(intrinsicsFile); intrinsics = data2.intrinsics; gTruth = data2.cameraPoses;

Ensure that the images are available on the path.

% Check if the image datastore exists. If not, load images. if ~exist("imds", "var") imageFolder = fullfile(downloadFolder, "sfmTrainingDataTUMRGBD", "images"); imds = imageDatastore(imageFolder); end

Retrieve the pixel color for each 2-D feature point and store it to enable displaying a colored 3-D reconstruction.

point2dColors = cell(1, viewGraph.NumViews); for viewId = 1:viewGraph.NumViews I = readimage(imds, viewId); currView = findView(viewGraph, viewId); imagePoints = currView.Points{:}.Location; idx = sub2ind(size(I,[1, 2]), round(imagePoints(:, 2)), round(imagePoints(:, 1))); R = I(:,:,1); G = I(:,:,2); B = I(:,:,3); point2dColors{viewId}= [R(idx) G(idx) B(idx)]; end

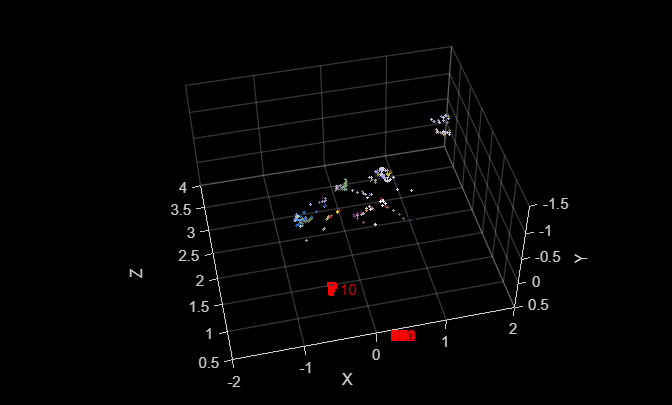

Display the initial 3-D points and the pair of camera poses.

point3dColors = helperGetpoint3dColors(wpSet, 1:wpSet.Count, point2dColors); [ax,cam] = helperInitializePlot(viewGraph, wpSet, point3dColors);

Find Point Tracks Across Views

To ensure reliable triangulation, only use point tracks that are observed in more than two views. Point tracks seen in just two views are provide weaker geometric constraints and are more susceptible to noise. Set the minNumViews variable to 3 to require point tracks are observed in at least 3 views. Increase the value of minNumViews to obtain more robust and stable, but fewer, 3-D points.

minNumViews = 3;

tracks = findTracks(viewGraph, MinTrackLength=minNumViews);

disp(numel(tracks)+" point tracks are found.")9379 point tracks are found.

Process Views to Reconstruct the Scene

Now process the remaining views one by one.

processedViewIds = wpSet.ViewIds;

while numel(processedViewIds) < viewGraph.NumViewsStep 1: Select Next Best View

Select the next best view by minimizing camera-pose uncertainty while maximizing opportunities to extend the reconstructed 3-D structure.

Find Views with Covisible 3-D points

Find the views that share covisible 3-D point with processed views, and get their feature correspondences to known 3-D points.

candidateViews = [];

for i = 1:numel(processedViewIds)

candidateViews = union(candidateViews, ...

setdiff(connectedViews(viewGraph, processedViewIds(i)).ViewId, processedViewIds));

end

if isempty(candidateViews)

break

end

% Find 2-D to 3-D Correspondences in covisible view

correspondences = helperFindCorrespondences(candidateViews, viewGraph, wpSet);Compute Visibility Score

To quantify uncertainty of each candidate view, count the number of reconstructed 3-D points that would be visible from that pose and evaluate how those points are distributed across the image at multiple resolutions. A view that sees more points and exhibits a more uniform spatial coverage across these scales receives a higher score.

scores = zeros(numel(candidateViews),1);

for i = 1:numel(scores)

candidateView = findView(viewGraph, candidateViews(i));

scores(i) = helperVisibilityScore(candidateView.Points{:}.Location(correspondences{i}(:,1),:), intrinsics.ImageSize);

end

% Pick the best view with highest visibility score

[~, idx] = max(scores);

nextViewId = candidateViews(idx);

nextView = findView(viewGraph, nextViewId);

nextViewCorrespondences = correspondences{idx};Step 2: Estimate Camera Pose of Next View

Estimate Absolute Camera Pose

With 2-D to 3-D correspondences in the selected view, estimate the camera pose with the Perspective-n-Point algorithm using estworldpose and refine the camera pose by performing a motion-only bundle adjustment using bundleAdjustmentMotion.

imagePoints = nextView.Points{:}(nextViewCorrespondences(:,1),:).Location;

worldPoints = wpSet.WorldPoints(nextViewCorrespondences(:,2),:);

maxAbsPoseError = 12; % in pixels

% Estimate the camera pose of next view in the world coordinate system

[worldPose, inlierIndex] = estworldpose(imagePoints, worldPoints, intrinsics, ...

MaxReprojectionError=maxAbsPoseError, MaxNumTrials=1e4);

inlierImagePoints=imagePoints(inlierIndex,:);

inlierWorldPoints=worldPoints(inlierIndex,:);

% Update 2-D to 3-D correspondences to get rid of outliers

nextViewCorrespondences = nextViewCorrespondences(inlierIndex,:);

% Perform motion-only bundle adjustment with inliers

refinedPose = bundleAdjustmentMotion(inlierWorldPoints, inlierImagePoints, worldPose, intrinsics,...

PointsUndistorted=true, AbsoluteTolerance=1e-9, RelativeTolerance=1e-16, MaxIterations=25);Update View Graph and World Point Set

Update the view graph with the estimated camera pose.

viewGraph = updateView(viewGraph, nextViewId, refinedPose);

Compute the relative pose between the next view and its connected views and update the connections.

viewTable = connectedViews(viewGraph, nextViewId);

connectedViewId = intersect(processedViewIds, viewTable.ViewId);

for i = 1:numel(connectedViewId)

relPose = rigidtform3d(findView(viewGraph, connectedViewId(i)).AbsolutePose.A\ refinedPose.A);

if hasConnection(viewGraph, connectedViewId(i), nextViewId)

viewGraph = updateConnection(viewGraph, connectedViewId(i), nextViewId, relPose);

else

viewGraph = updateConnection(viewGraph, nextViewId, connectedViewId(i), relPose.invert());

end

endAdd 2-D to 3-D correspondences to the world point set.

wpSet = addCorrespondences(wpSet, nextViewId, nextViewCorrespondences(:,2), nextViewCorrespondences(:,1));

Step 3: Triangulate New 3-D Points

Triangulate new 3-D points from the point tracks that pass through the next view and do not have corresponding 3-D point in the next view.

Find Point Tracks of Next View

Find point tracks that pass through the next view, and extract the segment of the point track that have known camera poses, which are required for multi-view triangulation.

[~, featureIndices] = findWorldPointsInView(wpSet,nextViewId);

untriangulatedTracks = [];

for trackIdx = 1:numel(tracks)

currTrackSegment = tracks(trackIdx);

hasNextView = ismember(nextViewId, currTrackSegment.ViewIds) && ...

~ismember(currTrackSegment.FeatureIndices(currTrackSegment.ViewIds==nextViewId), featureIndices);

if hasNextView

[currTrackSegment.ViewIds, ~, ib] = intersect([processedViewIds, nextViewId], currTrackSegment.ViewIds, "stable");

if numel(ib) >= minNumViews

currTrackSegment.FeatureIndices = currTrackSegment.FeatureIndices(ib);

currTrackSegment.Points = currTrackSegment.Points(ib,:);

untriangulatedTracks = [untriangulatedTracks, currTrackSegment]; %#ok<AGROW>

end

end

endRANSAC-Based Multi-View Triangulation

For each point track, perform multi-view triangulation. Point tracks may contain outliers due to geometric verification errors and ambiguous matches along an epipolar line from previous steps. A single mismatch can merge independent tracks and corrupt triangulation. To prevent this from happening, use ransac and triangulate to select a subset of observations that produce the best triangulation. Use maxReprojectionError and minTriangulationAngle to control the quality of triangulated 3-D points.

maxReprojectionErrorcontrols inlier selection during RANSAC, which affects both the number of observations used and their quality. Lower thresholds produce more accurate points by rejecting noisy observations, while higher thresholds accept more observations but may include outliers.minTriangulationAngleensures sufficient viewing angle between camera pairs to avoid ill-conditioned triangulation where small errors in 2-D observations lead to large errors in 3-D points.

maxReprojectionError = 4.0; % in pixels minTriangulationAngle = 1.5; % in degrees camPoses = poses(viewGraph, [processedViewIds, nextViewId]); for trackIdx = 1:numel(untriangulatedTracks) currTrackSegment = untriangulatedTracks(trackIdx); [xyzPoint, currTrackSegmentNew] = helperTriangulateMultiviewRANSAC(currTrackSegment, ... camPoses, intrinsics, MaxReprojectionError=maxReprojectionError, MinTriangulationAngle=minTriangulationAngle); if isempty(xyzPoint) || ~ismember(nextViewId, currTrackSegmentNew.ViewIds) continue end

Process the Triangulated 3-D Point

For each newly triangulated 3-D point, take one of the following actions:

Add. If none of the views in the point track has corresponding 3-D point, then add it to existing 3-D point set.

Merge. If the point track already has a corresponding 3-D point triangulated from previous views but not the next view, then reproject the existing 3-D point to the next view. If the reprojection error is below

maxReprojectionError, merge the estimate with the existing 3-D point by computing an updated location as a weighted sum.Discard. If the reprojection error exceeds the threshold, discard the newly triangulated 3-D point.

% Check if triangulated points can be merged with existing 3-D points isMerged = false; isDiscarded = false; for j = 1:numel(currTrackSegmentNew.ViewIds) % Check if the track has a known 3-D point viewIdInTrack = currTrackSegmentNew.ViewIds(j); featureIdx = currTrackSegmentNew.FeatureIndices(j); [point3dIdx, point2dIdx] = findWorldPointsInView(wpSet, viewIdInTrack); [hasKnown3DPoint, Lib] = ismember(featureIdx, point2dIdx); if hasKnown3DPoint % Reproject the exiting 3-D point to the current % view and compute reprojection error currViewFeatureIdx = currTrackSegmentNew.FeatureIndices(currTrackSegmentNew.ViewIds==nextViewId); extrinsics = nextView.AbsolutePose.invert(); oldLocation = wpSet.WorldPoints(point3dIdx(Lib),:); xy = world2img(oldLocation, extrinsics, intrinsics); reprojectionError = norm(xy - nextView.Points{:}(currViewFeatureIdx).Location); % If reprojection error is low, merge the existing and the new triangulated 3-D points. % Otherwise, discard the triangulated point and keep the existing one. if reprojectionError < maxReprojectionError % Compute merged 3-D point location as a weighted sum with the number of % views as the weights oldViews = findViewsOfWorldPoint(wpSet, point3dIdx(Lib)); w1 = numel(oldViews); w2 = numel(currTrackSegmentNew.ViewIds); newLocation = (oldLocation * w1 + xyzPoint * w2) / (w1 + w2); % Merge: Update 3-D point location and add 2-D to 3-D correspondence for the next view wpSet = updateWorldPoints(wpSet, point3dIdx(Lib), newLocation); wpSet = addCorrespondences(wpSet, nextViewId, point3dIdx(Lib), currViewFeatureIdx); isMerged = true; break else % Discard isDiscarded = true; break end end end % Add: If the triangulated point is not merged or discarded, add it to existing 3-D points. if ~isMerged && ~isDiscarded [wpSet, pointIndices] = addWorldPoints(wpSet, xyzPoint); % Add 2-D to 3-D correspondences for j = 1:numel(currTrackSegmentNew.ViewIds) viewIdInTrack = currTrackSegmentNew.ViewIds(j); wpSet = addCorrespondences(wpSet, viewIdInTrack, pointIndices, currTrackSegmentNew.FeatureIndices(j)); end % Get color of triangulated points point3dColors(pointIndices,:) = helperGetpoint3dColors(wpSet, pointIndices, point2dColors); end % If a processed view is rejected during multi-view triangulation, remove its % 2-D to 3-D correspondence if the 3-D point is known before triangulation. [viewsRemoved, ia] = setdiff(currTrackSegment.ViewIds, currTrackSegmentNew.ViewIds, "stable"); for k = 1: numel(viewsRemoved) viewIdInTrack = viewsRemoved(k); featureIdx = currTrackSegment.FeatureIndices(ia(k)); [point3dIdx, point2dIdx] = findWorldPointsInView(wpSet, viewIdInTrack); [hasKnown3DPoint, Lib] = ismember(featureIdx,point2dIdx); if hasKnown3DPoint wpSet = removeCorrespondences(wpSet, viewIdInTrack, point3dIdx(Lib)); end end end

Step 4: Iterative Bundle Adjustment and Re-Triangulation

Local Bundle Adjustment

To minimize accumulated errors during incremental SfM, it is essential to integrate bundle adjustment into the reconstruction pipeline after each round of multi-view triangulation. Since each new view primarily affects the local region of the scene, it is usually unnecessary to perform a global optimization after every addition. Instead, apply local bundle adjustment to refine the camera poses of the newly added view, its neighboring connected views that have large triangulation angle, and the 3-D points observed in these views.

% Find the views to be refined [refinedViewIds, fixedViewIds] = helperFindViewToRefine(viewGraph, processedViewIds, nextViewId, wpSet); maxNumIterationsLocal = 2; for k = 1:maxNumIterationsLocal % Perform local bundle adjustment [wpSet, viewGraph, mapPointIdx, reprojectionErrors] = bundleAdjustment(... wpSet, viewGraph, [fixedViewIds; refinedViewIds; nextViewId], intrinsics, FixedViewIDs=fixedViewIds, ... PointsUndistorted=true, AbsoluteTolerance=1e-7, RelativeTolerance=1e-16, ... Solver="preconditioned-conjugate-gradient", MaxIterations=25);

To further enhance the quality of the reconstruction, identify and discard 3-D points with large reprojection errors after bundle adjustment. Bundle adjustment can be repeated until no more outlier points are detected or the maximum number of iterations (maxNumIterationsLocal) is reached. More iterations provides better convergence at the cost of slower reconstruction speed.

isOutlierByError = reprojectionErrors > maxReprojectionError;

if any(isOutlierByError)

% Remove outlier 3-D points

wpSet = removeWorldPoints(wpSet, mapPointIdx(isOutlierByError));

% Remove color of 3-D points

point3dColors(mapPointIdx(isOutlierByError),:) = [];

else

break

endRe-Triangulation

After each local bundle adjustment, repeat multi-view triangulation to recover additional 3-D points and improve the completeness of the reconstructed scene. The refined camera poses from bundle adjustment may enable successful triangulation of points that previously failed due to pose inaccuracies.

% Get 2-D to 3-D correspondences of the next view after triangulation [~, featureIndices] = findWorldPointsInView(wpSet, nextViewId); for i = 1:numel(untriangulatedTracks) currTrackSegment = untriangulatedTracks(i); % Find point tracks that are not triangulated untriangulated = ~ismember(currTrackSegment.FeatureIndices(currTrackSegment.ViewIds==nextViewId), featureIndices); if untriangulated [wpSet,point3dColors] = helperTriangulate(nextView, camPoses, currTrackSegment, intrinsics, wpSet, point3dColors, point2dColors); end end end

Global Bundle Adjustment

Global bundle adjustment jointly refines all camera parameters and 3-D point across the entire scene. It is performed either after processing a substantial batch of views or once all images have been processed. This ensures the overall consistency and optimality of the reconstructed structure. Use batchSize to control the frequency of global bundle adjustment, and maxNumIterationsGlobal to control the convergence quality through iterative refinement.

batchSize = 10;

maxNumIterationsGlobal= 5;

needsGlobalBA = ~mod(numel(processedViewIds), batchSize) || numel(processedViewIds)==viewGraph.NumViews;

if needsGlobalBA

for iter = 1:maxNumIterationsGlobal

[wpSet, viewGraph, mapPointIdx, reprojectionErrors] = bundleAdjustment(...

wpSet, viewGraph, processedViewIds, intrinsics, FixedViewIDs=processedViewIds(1), ...

PointsUndistorted=true, AbsoluteTolerance=1e-7, RelativeTolerance=1e-16, ...

Solver="preconditioned-conjugate-gradient", MaxIterations=50);

isOutlierByError = reprojectionErrors > maxReprojectionError;

if any(isOutlierByError)

wpSet = removeWorldPoints(wpSet, mapPointIdx(isOutlierByError));

point3dColors(mapPointIdx(isOutlierByError), :)=[];

else

break

end

end

end

processedViewIds = [processedViewIds, nextViewId]; %#ok<*AGROW>

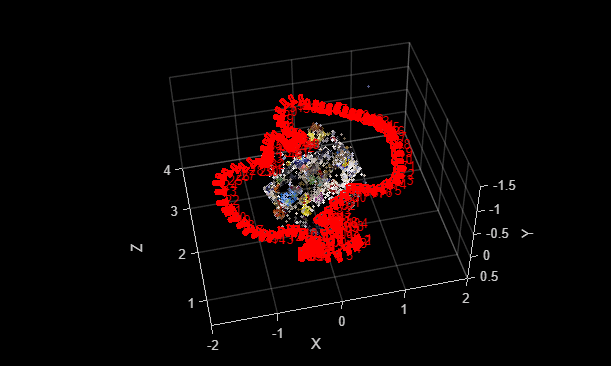

% Display the 3-D points and camera poses

[ax,cam] = helperUpdatePlot(viewGraph, wpSet, point3dColors, ax, cam);

end

disp(wpSet.Count + " out of " + numel(tracks) + " point tracks are successfully triangulated.")

7668 out of 9379 point tracks are successfully triangulated.

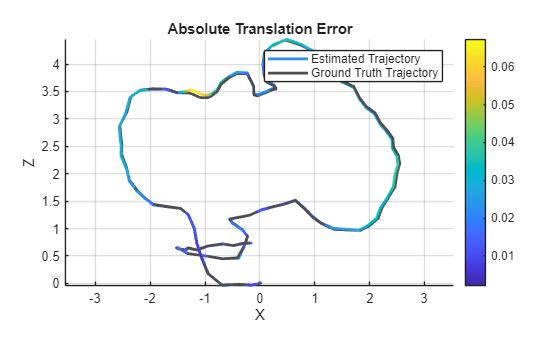

Compare Camera Poses Against Ground Truth

Compare the estimated camera poses with the ground truth poses.

camPoses = poses(viewGraph).AbsolutePose; metrics = compareTrajectories(camPoses, gTruth, AlignmentType="similarity"); figure metrics.plot("absolute-translation") view(0,0)

disp("RMSE of absolute rotation (deg): = " + metrics.AbsoluteRMSE(1));RMSE of absolute rotation (deg): = 1.3285

disp("RMSE of absolute translation (m): = " + metrics.AbsoluteRMSE(2));RMSE of absolute translation (m): = 0.024737

With the estimated camera poses and the sparse 3-D point cloud, you are now ready to perform dense reconstruction of the scene. See following examples for more details on the dense reconstruction workflow.

Dense Reconstruction from Multiple Views using RAFT

Reconstruct 3-D Scenes and Synthesize Novel Views Using Neural Radiance Field Model

Supporting Files

helperFindCorrespondences compute visibility score for each candidate view.

function correspondences = helperFindCorrespondences(candidateViews, viewGraph, wpSet) processedViewIds = wpSet.ViewIds; % Store the feature indices and corresponding map point IDs for each % candidate view correspondences = cell(numel(candidateViews), 1); for i = 1:numel(candidateViews) connViews = connectedViews(viewGraph, candidateViews(i)); % Find the connected views within the processed views for each % candidate view connectedViewIds = intersect(processedViewIds, connViews.ViewId); correspondences{i} = zeros(0, 2); for j = 1:numel(connectedViewIds) % Find the map points observed in the connected view [pointIndices, featureIndices] = findWorldPointsInView(wpSet, connectedViewIds(j)); if hasConnection(viewGraph, candidateViews(i), connectedViewIds(j)) conn = findConnection(viewGraph, candidateViews(i), connectedViewIds(j)); % Find the feature points in the current view with known 3-D % locations obtained from the connected view [~, ia, ib] = intersect(conn.Matches{:}(:,2), featureIndices, "stable"); if ~isempty(ia) correspondences{i} = union(correspondences{i}, [conn.Matches{:}(ia,1), pointIndices(ib)], "rows"); end else conn = findConnection(viewGraph, connectedViewIds(j), candidateViews(i)); % Find the feature points in the current view with known 3-D % locations obtained from the connected view [~, ia, ib] = intersect(conn.Matches{:}(:,1), featureIndices, "stable"); if ~isempty(ia) correspondences{i} = union(correspondences{i}, [conn.Matches{:}(ia,2), pointIndices(ib)], "rows"); end end end [~, firstIdx] = unique(correspondences{i}(:, 2), "stable"); correspondences{i} = correspondences{i}(firstIdx, :); end end

helperVisibilityScore compute visibility score for image points at different resolutions.

function score = helperVisibilityScore(imagePoints, imageSize) height = imageSize(1); width = imageSize(2); numLevels = 6; totalScore = 0; % Calculate scores for each pyramid level for level = 1:numLevels levelScore = (2^level)^2; % Calculate bin size for this level binsX = 2^level; binsY = 2^level; bin_width = width / binsX; bin_height = height / binsY; % Create occupancy grid for this level occupancy = false(binsY, binsX); % Mark occupied bins for i = 1:size(imagePoints, 1) x = imagePoints(i, 1); y = imagePoints(i, 2); % Convert to bin indices (0-based to 1-based) binX = min(floor(x / bin_width) + 1, binsX); binY = min(floor(y / bin_height) + 1, binsY); % Ensure indices are within bounds binX = max(1, min(binX, binsX)); binY = max(1, min(binY, binsY)); occupancy(binY, binX) = true; end % Add contribution from this level numOccupiedBins = sum(occupancy(:)); totalScore = totalScore + numOccupiedBins * levelScore; end score = totalScore; end

helperFindViewToRefine find view to be refined in local bundle adjustment.

function [refinedViewIds, fixedViewIds] = helperFindViewToRefine(viewGraph, processedViewIds, nextViewId, wpSet, args) arguments viewGraph processedViewIds nextViewId wpSet args.MinNumRefinedViews = 5 args.MinTriangulationAngle = 6.0 % in degrees args.MaxNumFixedViews = 5 end [localViewTable, dist] = connectedViews(viewGraph,nextViewId,MaxDistance=2); [localViewIds, ia] = intersect(localViewTable.ViewId, processedViewIds); refinedViewIds = localViewIds(dist(ia)==1); % Exclude views with low parallax thetas75 = zeros(numel(refinedViewIds), 1); [points3dIndices, points2dIndices] = findWorldPointsInView(wpSet, nextViewId); for i = 1:numel(refinedViewIds) if hasConnection(viewGraph,refinedViewIds(i),nextViewId) conn = findConnection(viewGraph,refinedViewIds(i),nextViewId); matches = conn.Matches{:}; else conn = findConnection(viewGraph,nextViewId,refinedViewIds(i)); matches = conn.Matches{:}(:, [2 1]); end [~, ~, ib2] = intersect(matches(:,2), points2dIndices, "stable"); points3d = wpSet.WorldPoints(points3dIndices(ib2), :); % Compute 75% percentile of triangulation angle thetas = helperComputeTriangulationAngle(points3d, poses(viewGraph).AbsolutePose([refinedViewIds(i), nextViewId])); thetas75(i) = quantile(thetas, 0.75); end % Pick views that have large parallax with the next view hasLargeParallax = thetas75>args.MinTriangulationAngle; if nnz(hasLargeParallax) < args.MinNumRefinedViews hasLargeParallax = thetas75>args.MinTriangulationAngle/2; if nnz(hasLargeParallax) < args.MinNumRefinedViews hasLargeParallax = thetas75>args.MinTriangulationAngle/4; end end refinedViewIds = refinedViewIds(hasLargeParallax); fixedViewIds = localViewIds(dist(ia)==2); fixedViewIds = fixedViewIds(1:min(numel(fixedViewIds), args.MaxNumFixedViews)); end

helperTriangulate create new 3-D point using RANSAC-based multi-view triangulation.

function [wpSet,point3dColors] = helperTriangulate(nextView, camPoses, currTrack, intrinsics, wpSet, point3dColors, point2dColors, args) arguments nextView camPoses currTrack intrinsics wpSet point3dColors point2dColors args.MaxReprojectionError = 4 end nextViewId = nextView.ViewId; % After performing ransac-based triangulation, the point tracks are updated % and some tracks may not contain the current view any more. [xyzPoint, currTrackNew] = helperTriangulateMultiviewRANSAC(currTrack, camPoses, intrinsics); if isempty(xyzPoint) || ~ismember(nextViewId, currTrackNew.ViewIds) return end isMerged = false; isDiscarded = false; for j = 1:numel(currTrackNew.ViewIds) viewIdToRemove = currTrackNew.ViewIds(j); featureIdx = currTrackNew.FeatureIndices(j); [point3dIdx, point2dIdx] = findWorldPointsInView(wpSet, viewIdToRemove); [Lia, Lib] = ismember(featureIdx,point2dIdx); % If an image point in the track already has a known 3-D point, % then check if the track is different and can be merged. If not, % then the triangulated 3-D point is contributed by refined current % view pose. The 2-D to 3-D correspondence was rejected when % estimating the current view pose, but now with refined view pose, % the correspondence becomes valid. if Lia currViewFeatureIdx = currTrackNew.FeatureIndices(currTrackNew.ViewIds==nextViewId); extrinsics = nextView.AbsolutePose.invert(); oldLocation = wpSet.WorldPoints(point3dIdx(Lib),:); xy = world2img(oldLocation, extrinsics, intrinsics); reprojectionError = norm(xy - nextView.Points{:}(currViewFeatureIdx).Location); % Do not add the new 3-D point. Just add correspondence % between the existing 3-D point and the current view. if reprojectionError < args.MaxReprojectionError oldViews=findViewsOfWorldPoint(wpSet, point3dIdx(Lib)); w1 = numel(oldViews); w2 = numel(currTrackNew.ViewIds); newLocation = (oldLocation * w1 + xyzPoint * w2) / (w1 + w2); wpSet = updateWorldPoints(wpSet, point3dIdx(Lib), newLocation); wpSet = addCorrespondences(wpSet, nextViewId, point3dIdx(Lib), currViewFeatureIdx); isMerged = true; break else isDiscarded = true; break end end end % If a processed view is rejected during triangulation of a point % track, remove the 2-D to 3-D correspondence if the 3-D point is known % before triangulation. [viewsRemoved, ia] = setdiff(currTrack.ViewIds, currTrackNew.ViewIds, "stable"); for k = 1: numel(viewsRemoved) viewIdToRemove = viewsRemoved(k); featureIdx = currTrack.FeatureIndices(ia(k)); [point3dIdx, point2dIdx] = findWorldPointsInView(wpSet, viewIdToRemove); [Lia, Lib] = ismember(featureIdx,point2dIdx); if Lia wpSet = removeCorrespondences(wpSet, viewIdToRemove, point3dIdx(Lib)); end end if ~isMerged && ~isDiscarded [wpSet, pointIndices] = addWorldPoints(wpSet, xyzPoint); % Add 2-D to 3-D correspondences for j = 1:numel(currTrackNew.ViewIds) viewIdToRemove = currTrackNew.ViewIds(j); wpSet = addCorrespondences(wpSet, viewIdToRemove, pointIndices, currTrackNew.FeatureIndices(j)); end point3dColors(pointIndices,:) = helperGetpoint3dColors(wpSet, pointIndices, point2dColors); end end

helperComputeTriangulationAngle computer triangulation angle of 3-D points for two cameras.

function theta = helperComputeTriangulationAngle(point3Ds, camPosePair) camCenters = [camPosePair(1).Translation; camPosePair(2).Translation]; theta = zeros(size(point3Ds,1),1); for j=1:size(point3Ds,1) rays = point3Ds(j,:) - camCenters; rays = rays ./ vecnorm(rays, 2, 2); cosTheta = dot(rays(1,:), rays(2,:)); cosTheta = max(-1, min(1, cosTheta)); theta(j) = acosd(cosTheta); end end

helperGetpoint3dColors compute color of 3-D points as the average of images that observe the points.

function point3dColors = helperGetpoint3dColors(wpSet, pointIndices, point2dColors) point3dColors = zeros(numel(pointIndices), 3, "uint8"); for i = 1:numel(pointIndices) ptIdx = pointIndices(i); [viewIds, featureIndices] = findViewsOfWorldPoint(wpSet, ptIdx); allColors = zeros(numel(viewIds),3, "uint8"); for idx = 1:numel(viewIds) allColors(idx,:) = point2dColors{viewIds(idx)}(featureIndices(idx), :); end point3dColors(i,:) = mean(allColors); end end

helperInitializePlot display 3-D points and camera poses

function [ax,cam] = helperInitializePlot(viewGraph, wpSet, point3dColors) camPoses = poses(viewGraph); f = figure; f.Position(3:4)=1.2*f.Position(3:4); f.Visible = "on"; ax = axes(f); % Show 3-D points with colors pcshow(wpSet.WorldPoints, point3dColors, Parent=ax, VerticalAxis="Y", ... VerticalAxisDir="Down", MarkerSize=30); hold on % Show camera poses cam = plotCamera(camPoses, Size=0.05, Parent=ax); ax.XLim = [-2, 2]; ax.YLim = [-1.5, 0.5]; ax.ZLim = [0.5, 4]; xlabel("X"); ylabel("Y"); zlabel("Z"); end

helperUpdatePlot update 3-D points and camera poses in the plot

function [ax, cam] = helperUpdatePlot(viewGraph, wpSet, point3dColors, ax, cam) processedViewIds = wpSet.ViewIds; camPoses = poses(viewGraph, processedViewIds).AbsolutePose; % Update camera poses for i = 1:numel(processedViewIds) cam(processedViewIds(i)).AbsolutePose = camPoses(i); end % Update 3-D point locations and colors ax.Children(end).XData=wpSet.WorldPoints(:,1); ax.Children(end).YData=wpSet.WorldPoints(:,2); ax.Children(end).ZData=wpSet.WorldPoints(:,3); ax.Children(end).CData=point3dColors; drawnow limitrate end

See Also

findTracks | pointTrack | estworldpose | bundleAdjustmentMotion | ransac | triangulate | bundleAdjustment | findWorldPointsInView | compareTrajectories