selectModels

Class: ClassificationLinear

Choose subset of regularized, binary linear classification models

Description

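SubMdl = selectModels(Mdl,idx) returns a subset of trained, binary linear classification models from a set of binary linear classification models (Mdl) trained using various regularization strengths. The indices (idx) correspond to the regularization strengths in Mdl.Lambda and specify which models to return.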
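A minimal sketch of the call, assuming the Statistics and Machine Learning Toolbox and its bundled ionosphere sample data set. The five-point Lambda grid and the indices [1 3] are arbitrary illustrative values.

```matlab
% Load sample data: X is a 351-by-34 numeric matrix, Y holds 'b'/'g' labels
load ionosphere

% Train one ClassificationLinear object that holds a model per strength
Lambda = logspace(-5,-1,5);            % illustrative grid of five strengths
Mdl = fitclinear(X,Y,'Lambda',Lambda);

% Keep only the models trained with the 1st and 3rd strengths in Mdl.Lambda
idx = [1 3];
SubMdl = selectModels(Mdl,idx);

% SubMdl.Lambda lists the selected strengths; Beta has one column per model
disp(SubMdl.Lambda)
disp(size(SubMdl.Beta))
```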

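Input Arguments

Mdl

Binary linear classification model, specified as a ClassificationLinear model object. Mdl contains one model for each regularization strength in Mdl.Lambda.

idx

Indices of the regularization strengths to keep, specified as a numeric vector of positive integers. Each index refers to an element of Mdl.Lambda, so values must be in the interval [1, numel(Mdl.Lambda)].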

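Output Arguments

SubMdl

Subset of the regularized models in Mdl, returned as a ClassificationLinear model object that contains numel(idx) models.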

Examples

Tips

One way to build several predictive, binary linear classification models is:

Hold out a portion of the data for testing.

Train a binary, linear classification model using

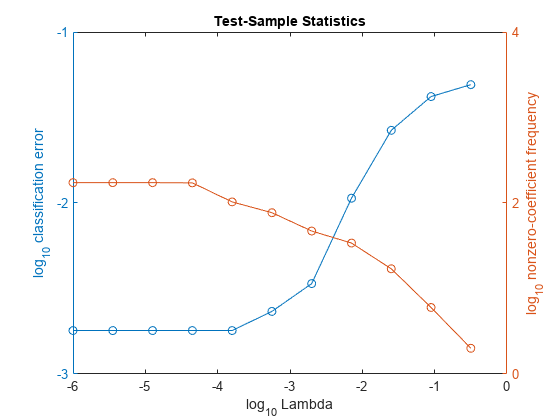

fitclinear. Specify a grid of regularization strengths using the'Lambda'name-value pair argument and supply the training data.fitclinearreturns oneClassificationLinearmodel object, but it contains a model for each regularization strength.To determine the quality of each regularized model, pass the returned model object and the held-out data to, for example,

loss.Identify the indices (

idx) of a satisfactory subset of regularized models, and then pass the returned model and the indices toselectModels.selectModelsreturns oneClassificationLinearmodel object, but it containsnumel(idx)regularized models.To predict class labels for new data, pass the data and the subset of regularized models to

predict.
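The steps above can be sketched in MATLAB as follows. This is a minimal sketch, assuming the Statistics and Machine Learning Toolbox and its ionosphere sample data. The 30% holdout, the 11-point Lambda grid, and the rule "keep the three strengths with the lowest held-out error" are illustrative choices, not recommendations from this page.

```matlab
% Assumes Statistics and Machine Learning Toolbox and the ionosphere sample data
load ionosphere
rng(1)                                   % reproducible partition

% Hold out 30% of the data for testing (stratified by class)
cvp = cvpartition(Y,'Holdout',0.3);
XTrain = X(training(cvp),:);  YTrain = Y(training(cvp));
XTest  = X(test(cvp),:);      YTest  = Y(test(cvp));

% Train one model per regularization strength in the grid
Lambda = logspace(-6,-0.5,11);
Mdl = fitclinear(XTrain,YTrain,'Lambda',Lambda);

% Held-out classification error, one value per strength
ce = loss(Mdl,XTest,YTest);

% Illustrative selection rule: the three strengths with the lowest error
[~,order] = sort(ce);
idx = sort(order(1:3));
SubMdl = selectModels(Mdl,idx);

% Predict; each column holds the labels from one selected model
labels = predict(SubMdl,XTest);
disp(size(labels))                       % numel(YTest)-by-3
```

Any other criterion (for example, the sparsest model whose error is within some tolerance of the minimum) can replace the selection rule; selectModels only needs the resulting indices.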