이 번역 페이지는 최신 내용을 담고 있지 않습니다. 최신 내용을 영문으로 보려면 여기를 클릭하십시오.

적응형 크루즈 컨트롤을 위해 DDPG 에이전트 훈련시키기

이 예제에서는 Simulink®에서 DDPG(심층 결정적 정책 경사법) 에이전트에게 ACC(적응형 크루즈 컨트롤)를 훈련시키는 방법을 보여줍니다. DDPG 에이전트에 대한 자세한 내용은 DDPG(심층 결정적 정책 경사) 에이전트 항목을 참조하십시오.

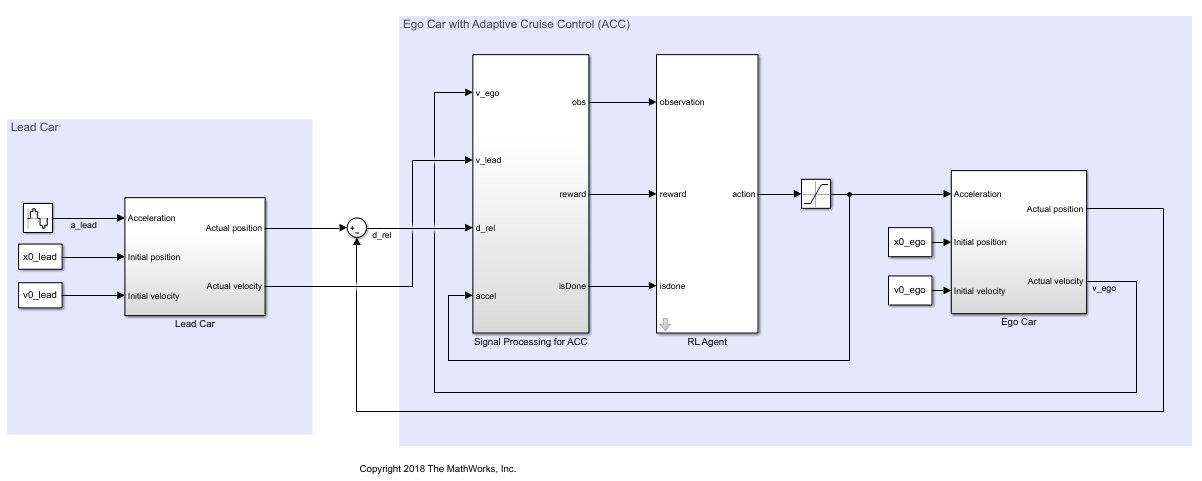

Simulink 모델

이 예제의 강화 학습 환경은 자기 차량(ego car)과 선행 차량에 대한 간단한 종방향 동역학입니다. 훈련 목표는 자기 차량이 종방향으로의 가속과 제동을 제어하여 선행 차량과의 안전 거리를 유지하면서 설정 속도로 주행하도록 하는 것입니다. 이 예제에서는 Adaptive Cruise Control System Using Model Predictive Control (Model Predictive Control Toolbox) 예제와 동일한 차량 모델을 사용합니다.

두 차량의 초기 위치와 속도를 지정합니다.

x0_lead = 50; % initial position for lead car (m) v0_lead = 25; % initial velocity for lead car (m/s) x0_ego = 10; % initial position for ego car (m) v0_ego = 20; % initial velocity for ego car (m/s)

정지 상태의 디폴트 간격(m), 시간차(s) 및 운전자 설정 속도(m/s)를 지정합니다.

D_default = 10; t_gap = 1.4; v_set = 30;

차량 동특성의 물리적 한계를 시뮬레이션하기 위해 가속 범위를 [–3,2]m/s^2으로 제한합니다.

amin_ego = -3; amax_ego = 2;

샘플 시간 Ts와 시뮬레이션 지속 시간 Tf를 초 단위로 정의합니다.

Ts = 0.1; Tf = 60;

모델을 엽니다.

mdl = "rlACCMdl"; open_system(mdl) agentblk = mdl + "/RL Agent";

이 모델의 경우 다음이 적용됩니다.

에이전트에서 환경으로 전달되는 가속 행동 신호는 –3m/s^2에서 2m/s^2까지입니다.

자기 차량의 기준 속도 는 다음과 같이 정의됩니다. 상대 거리가 안전 거리보다 짧으면 자기 차량은 선행 차량 속도와 운전자 설정 속도의 최솟값을 추종합니다. 이 같은 방식으로 자기 차량은 선행 차량과의 거리를 유지합니다. 상대 거리가 안전 거리보다 길면 자기 차량은 운전자 설정 속도를 추종합니다. 이 예제에서 안전 거리는 자기 차량 종방향 속도 의 선형 함수로 정의됩니다. 즉, 입니다. 이 안전 거리가 자기 차량의 기준 추종 속도를 결정합니다.

환경에서 관측하는 값은 속도 오차 , 적분 , 자기 차량 종방향 속도 입니다.

자기 차량의 종방향 속도가 0보다 작거나 선행 차량과 자기 차량 간의 상대 거리가 0보다 작아지면 시뮬레이션이 종료됩니다.

매 시간 스텝 마다 제공되는 보상 는 다음과 같습니다.

여기서 은 이전 시간 스텝의 제어 입력입니다. 속도 오차 인 경우 논리값 이고, 그 외의 경우에는 입니다.

환경 인터페이스 만들기

모델에 대한 강화 학습 환경 인터페이스를 만듭니다.

관측값 사양을 만듭니다.

obsInfo = rlNumericSpec([3 1], ... LowerLimit=-inf*ones(3,1), ... UpperLimit=inf*ones(3,1)); obsInfo.Name = "observations"; obsInfo.Description = ... "velocity error and ego velocity";

행동 사양을 만듭니다.

actInfo = rlNumericSpec([1 1], ... LowerLimit=-3,UpperLimit=2); actInfo.Name = "acceleration";

환경 인터페이스를 만듭니다.

env = rlSimulinkEnv(mdl,agentblk,obsInfo,actInfo);

선행 차량 위치에 대한 초기 조건을 정의하려면 익명 함수 핸들을 사용하여 환경 재설정 함수를 지정하십시오. 예제 끝에 정의된 재설정 함수 localResetFcn은 선행 차량의 초기 위치를 무작위로 할당합니다.

env.ResetFcn = @(in)localResetFcn(in);

재현이 가능하도록 난수 생성기 시드값을 고정합니다.

rng("default")DDPG 에이전트 만들기

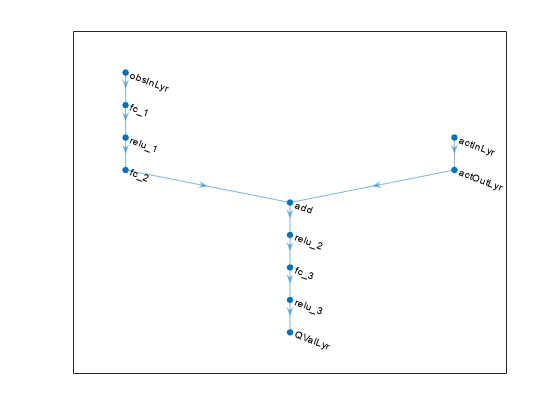

DDPG 에이전트는 파라미터화된 Q-값 함수 크리틱을 사용하여 정책의 값을 추정합니다. Q-값 함수는 현재 관측값과 행동을 입력값으로 받고 single형 스칼라를 출력값(현재 관측값에 해당하는 상태로부터 행동을 받고 그 후 정책을 따를 때 추정되는 감가된 누적 장기 보상)으로 반환합니다.

크리틱 내에서 파라미터화된 Q-값 함수를 모델링하려면 두 개의 입력 계층(obsInfo로 지정된 대로 관측값 채널에 대한 입력 계층 및 actInfo로 지정된 대로 행동 채널에 대한 입력 계층)과 (스칼라 값을 반환하는) 하나의 출력 계층을 갖는 신경망을 사용하십시오.

각 신경망 경로를 layer 객체로 구성된 배열로 정의합니다. prod(obsInfo.Dimension)과 prod(actInfo.Dimension)을 사용하여 관측값 공간과 행동 공간의 차원 수를 반환합니다.

각 경로의 입력 계층과 출력 계층에 이름을 할당합니다. 이러한 이름을 사용하면 경로를 연결한 다음 나중에 신경망 입력 계층 및 출력 계층을 적절한 환경 채널과 명시적으로 연결할 수 있습니다.

L = 48; % number of neurons % Main path mainPath = [ featureInputLayer( ... prod(obsInfo.Dimension), ... Name="obsInLyr") fullyConnectedLayer(L) reluLayer fullyConnectedLayer(L) additionLayer(2,Name="add") reluLayer fullyConnectedLayer(L) reluLayer fullyConnectedLayer(1,Name="QValLyr") ]; % Action path actionPath = [ featureInputLayer( ... prod(actInfo.Dimension), ... Name="actInLyr") fullyConnectedLayer(L,Name="actOutLyr") ]; % Assemble layers into a layergraph object criticNet = layerGraph(mainPath); criticNet = addLayers(criticNet, actionPath); % Connect layers criticNet = connectLayers(criticNet,"actOutLyr","add/in2"); % Convert to dlnetwork and display number of weights criticNet = dlnetwork(criticNet); summary(criticNet)

Initialized: true

Number of learnables: 5k

Inputs:

1 'obsInLyr' 3 features

2 'actInLyr' 1 features

크리틱 신경망 구성을 확인합니다.

plot(criticNet)

criticNet, 환경 관측값 및 행동 사양, 그리고 환경 관측값 채널 및 행동 채널과 연결할 신경망 입력 계층의 이름을 사용하여 크리틱 근사기 객체를 만듭니다. 자세한 내용은 rlQValueFunction 항목을 참조하십시오.

critic = rlQValueFunction(criticNet,obsInfo,actInfo,... ObservationInputNames="obsInLyr",ActionInputNames="actInLyr");

DDPG 에이전트는 연속 행동 공간에 대해 연속 결정적 액터가 학습한 파라미터화된 결정적 정책을 사용합니다. 이 액터는 현재 관측값을 입력값으로 받고 관측값의 결정적 함수인 행동을 출력값으로 반환합니다.

액터 내에서 파라미터화된 정책을 모델링하려면 (obsInfo로 지정된 대로 환경 관측값 채널의 내용을 받는) 하나의 입력 계층과 (actInfo로 지정된 대로 환경 행동 채널에 행동을 반환하는) 하나의 출력 계층을 갖는 신경망을 사용하십시오.

신경망을 layer 객체로 구성된 배열로 정의합니다. tanhLayer 다음에 scalingLayer를 사용하여 신경망 출력값을 행동 범위로 스케일링합니다.

actorNet = [

featureInputLayer(prod(obsInfo.Dimension))

fullyConnectedLayer(L)

reluLayer

fullyConnectedLayer(L)

reluLayer

fullyConnectedLayer(L)

reluLayer

fullyConnectedLayer(prod(actInfo.Dimension))

tanhLayer

scalingLayer(Scale=2.5,Bias=-0.5)

];dlnetwork로 변환하고 가중치 개수를 표시합니다.

actorNet = dlnetwork(actorNet); summary(actorNet)

Initialized: true

Number of learnables: 4.9k

Inputs:

1 'input' 3 features

actorNet과 관측값 및 행동 사양을 사용하여 액터를 만듭니다. 연속 결정적 액터에 대한 자세한 내용은 rlContinuousDeterministicActor 항목을 참조하십시오.

actor = rlContinuousDeterministicActor(actorNet, ...

obsInfo,actInfo);rlOptimizerOptions를 사용하여 크리틱과 액터에 대한 훈련 옵션을 지정합니다.

criticOptions = rlOptimizerOptions( ... LearnRate=1e-3, ... GradientThreshold=1, ... L2RegularizationFactor=1e-4); actorOptions = rlOptimizerOptions( ... LearnRate=1e-4, ... GradientThreshold=1, ... L2RegularizationFactor=1e-4);

rlDDPGAgentOptions를 사용하여 DDPG 에이전트 옵션을 지정하고 액터와 크리틱의 훈련 옵션을 포함시킵니다.

agentOptions = rlDDPGAgentOptions(... SampleTime=Ts,... ActorOptimizerOptions=actorOptions,... CriticOptimizerOptions=criticOptions,... ExperienceBufferLength=1e6);

점 표기법을 사용하여 에이전트 옵션을 설정하거나 수정할 수도 있습니다.

agentOptions.NoiseOptions.Variance = 0.6; agentOptions.NoiseOptions.VarianceDecayRate = 1e-5;

또는 먼저 에이전트를 만든 다음, 에이전트의 option 객체에 액세스하고 점 표기법을 사용하여 옵션을 수정할 수 있습니다.

지정된 액터 표현, 크리틱 표현, 에이전트 옵션을 사용하여 DDPG 에이전트를 만듭니다. 자세한 내용은 rlDDPGAgent 항목을 참조하십시오.

agent = rlDDPGAgent(actor,critic,agentOptions);

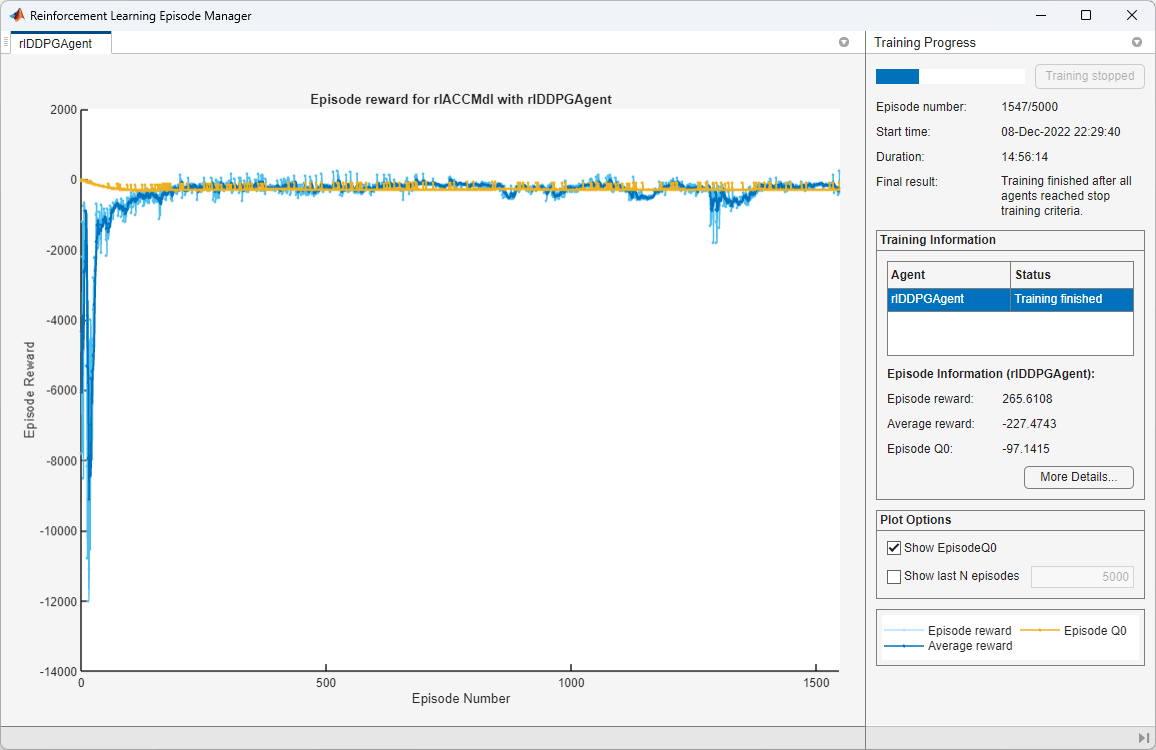

에이전트 훈련시키기

에이전트를 훈련시키려면 먼저 훈련 옵션을 지정하십시오. 이 예제에서는 다음 옵션을 사용합니다.

최대

5000개의 에피소드에 대해 훈련을 실행하며, 각 에피소드마다 최대 600개의 시간 스텝이 지속됩니다.에피소드 관리자 대화 상자에 훈련 진행 상황을 표시합니다.

에이전트가 받은 에피소드 보상이 260보다 클 때 훈련을 중지합니다.

자세한 내용은 rlTrainingOptions 항목을 참조하십시오.

maxepisodes = 5000; maxsteps = ceil(Tf/Ts); trainingOpts = rlTrainingOptions(... MaxEpisodes=maxepisodes,... MaxStepsPerEpisode=maxsteps,... Verbose=false,... Plots="training-progress",... StopTrainingCriteria="EpisodeReward",... StopTrainingValue=260);

train 함수를 사용하여 에이전트를 훈련시킵니다. 훈련은 완료하는 데 수 분이 소요되는 계산 집약적인 절차입니다. 이 예제를 실행하는 동안 시간을 절약하려면 doTraining을 false로 설정하여 사전 훈련된 에이전트를 불러오십시오. 에이전트를 직접 훈련시키려면 doTraining을 true로 설정하십시오.

doTraining = false; if doTraining % Train the agent. trainingStats = train(agent,env,trainingOpts); else % Load a pretrained agent for the example. load("SimulinkACCDDPG.mat","agent") end

DDPG 에이전트 시뮬레이션하기

훈련된 에이전트의 성능을 검증하려면 다음 명령을 사용하여 Simulink 환경 내에서 에이전트를 시뮬레이션할 수 있습니다. 에이전트 시뮬레이션에 대한 자세한 내용은 rlSimulationOptions 항목과 sim 항목을 참조하십시오.

simOptions = rlSimulationOptions(MaxSteps=maxsteps); experience = sim(env,agent,simOptions);

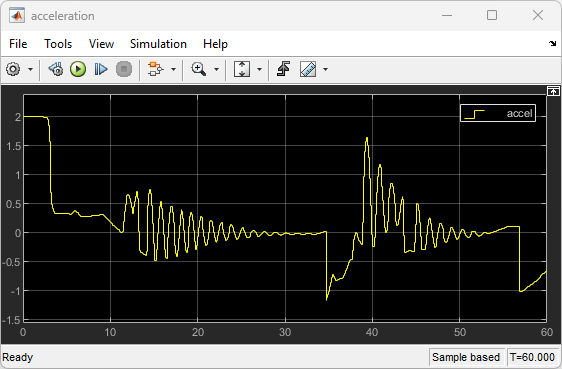

결정적 초기 조건을 사용하여 훈련된 에이전트를 시연하려면 Simulink에서 모델을 시뮬레이션하십시오.

x0_lead = 80; sim(mdl)

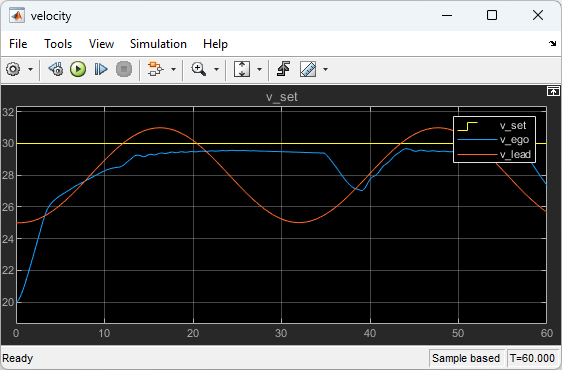

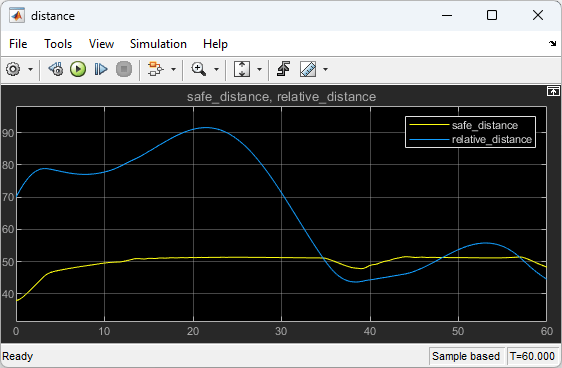

다음 플롯은 선행 차량이 자기 차량보다 70(m) 앞설 때의 시뮬레이션 결과를 보여줍니다.

처음 35초 동안에는 상대 거리가 안전 거리(아래쪽 플롯)보다 크기 때문에 자기 차량이 설정 속도(중간 플롯)를 추종합니다. 속도를 높여 설정 속도에 도달하기 위해 가속이 처음에는 양수입니다(위쪽 플롯).

35초부터 48초까지는 상대 거리가 안전 거리(아래쪽 플롯)보다 작기 때문에 자기 차량이 선행 차량 속도의 최솟값과 설정 속도를 추종합니다. 속도를 낮춰 선행 차량 속도를 추종하기 위해 35초부터 약 37초까지 가속이 음수로 바뀝니다(위쪽 플롯). 그 후, 자기 차량은 상대 거리를 안전 거리로 간주할 수 있는지 여부에 따라 선행 차량 속도와 설정 속도 사이의 최솟값 또는 설정 속도를 계속 추종하기 위해 가속을 조정합니다.

Simulink 모델을 닫습니다.

bdclose(mdl)

재설정 함수

function in = localResetFcn(in) % Reset the initial position of the lead car. in = setVariable(in,"x0_lead",40+randi(60,1,1)); end

참고 항목

함수

train|sim|rlSimulinkEnv

객체

rlDDPGAgent|rlDDPGAgentOptions|rlQValueFunction|rlContinuousDeterministicActor|rlTrainingOptions|rlSimulationOptions|rlOptimizerOptions