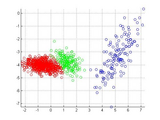

This submission implements variational Bayesian (VB) inference for the Gaussian mixture model. Unlike the EM algorithm (maximum likelihood estimation), it can automatically determine the number of mixture components k. Try the following code for a demo:

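    close all; clear;

    % Generate n samples from a d-dimensional Gaussian mixture with k components
    d = 2;
    k = 3;
    n = 2000;
    [X,z] = mixGaussRnd(d,k,n);
    plotClass(X,z);

    % Split the data into a training half (X1) and a testing half (X2)
    m = floor(n/2);
    X1 = X(:,1:m);
    X2 = X(:,(m+1):end);

    % VB fitting, starting from 10 components
    [y1, model, L] = mixGaussVb(X1,10);
    figure;
    plotClass(X1,y1);
    figure;
    plot(L)   % variational lower bound

    % Predict testing data
    [y2, R] = mixGaussVbPred(model,X2);
    figure;
    plotClass(X2,y2);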

The data set has 3 clusters. You only need to set an initial number of components (say 10) that is larger than the intrinsic number of clusters. The algorithm will automatically find the proper k.
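One way to check how many clusters the fit actually kept is to count the distinct labels it returned. The sketch below assumes `y1` is the label vector returned by `mixGaussVb` in the demo; a small hypothetical vector stands in for it so the snippet runs on its own, and the variable names `labels`, `kEff`, and `counts` are illustrative only.

```matlab
% Hypothetical stand-in for the label vector y1 returned by mixGaussVb.
% In the demo, delete this line and use the real y1 instead.
y1 = [1 1 3 3 3 7 7 1 3 7];

% Distinct labels that are actually assigned to at least one point
labels = unique(y1);

% Effective number of clusters kept by the fit
kEff = numel(labels);

% Number of points assigned to each surviving cluster
counts = arrayfun(@(c) sum(y1 == c), labels);

fprintf('Effective number of clusters: %d\n', kEff);
```

If the fit behaves as described, `kEff` for the demo data should come out close to 3 even though 10 components were requested.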

A detailed description of the algorithm can be found in the reference: Pattern Recognition and Machine Learning by Christopher M. Bishop (p. 474).
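As a brief sketch of why surplus components disappear, the variational treatment in the reference places a Dirichlet prior on the mixing coefficients. Assuming a symmetric prior with parameter $\alpha_0$, $K$ initial components, $N$ data points, and responsibilities $r_{nk}$ (this notation follows the book and is not necessarily what the code uses), the expected mixing coefficient of component $k$ under the variational posterior is:

$$
N_k = \sum_{n=1}^{N} r_{nk}, \qquad
\mathbb{E}[\pi_k] = \frac{\alpha_0 + N_k}{K\alpha_0 + N}
$$

A component that takes responsibility for almost no points has $N_k \approx 0$, so for small $\alpha_0$ its expected weight is close to zero and it plays essentially no role in the fitted model. How `mixGaussVb` sets $\alpha_0$ by default is not stated here.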

Upon request, I have added a prediction function for out-of-sample inference.
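The second output `R` of `mixGaussVbPred` can be used to judge how confident each prediction is. The sketch below assumes `R` is an n-by-k matrix of responsibilities whose rows sum to 1; that shape is an assumption, not something stated above. A small hypothetical matrix stands in for the real output, and the 0.5 threshold is arbitrary.

```matlab
% Hypothetical stand-in for R from [y2, R] = mixGaussVbPred(model, X2).
% ASSUMPTION: one row per test point, one column per component, rows sum to 1.
R = [0.90 0.05 0.05;
     0.20 0.70 0.10;
     0.40 0.35 0.25];

% Hard label = component with the largest responsibility;
% conf = that largest responsibility, used here as a confidence score
[conf, yhat] = max(R, [], 2);

% Indices of test points whose best component has less than 50% responsibility
uncertain = find(conf < 0.5);
```

Points listed in `uncertain` lie near cluster boundaries and may deserve a closer look before their hard labels are trusted.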

This function is now part of the PRML toolbox (http://www.mathworks.com/matlabcentral/fileexchange/55826-pattern-recognition-and-machine-learning-toolbox).

Cite As

Mo Chen (2026). Variational Bayesian Inference for Gaussian Mixture Model (https://kr.mathworks.com/matlabcentral/fileexchange/35362-variational-bayesian-inference-for-gaussian-mixture-model), MATLAB Central File Exchange. Retrieved .

| Version | Published | Release Notes |
|---|---|---|
| 1.0.0.0 | | Added prediction function; greatly simplified the code |